译 - In Search of an Understandable Consensus Algorithm (Extended Version)

《In Search of an Understandable Consensus Algorithm (Extended Version)》 直译过来就是 《寻找可理解的共识算法(扩展版)》,这篇文章中详细介绍了 Raft 算法的设计初衷以及其主要的设计实现,这是一篇学习共识算法的必读的一片论文。

摘要

Raft is a consensus algorithm for managing a replicated log. It produces a result equivalent to (multi-)Paxos, and it is as efficient as Paxos, but its structure is different from Paxos; this makes Raft more understandable than Paxos and also provides a better foundation for building practical systems. In order to enhance understandability, Raft separates the key elements of consensus, such as leader election, log replication, and safety, and it enforces a stronger degree of coherency to reduce the number of states that must be considered. Results from a user study demonstrate that Raft is easier for students to learn than Paxos. Raft also includes a new mechanism for changing the cluster membership, which uses overlapping majorities to guarantee safety.

Raft 是一种用来管理日志复制的共识算法。它的性能和 (multi-)Paxos 是一样的,并且和Paxos一样高效,但是它的结构和 Paxos 不一样;这使得 Raft 更容易理解并且也为构建实用系统提供了更好的基础。为了增强可理解性,Raft 将共识算法分为了几个部分,例如领导选取(leader selection),日志复制(log replication)和安全性(safety),同时它使用了更强的一致性来减少了必须要考虑的状态数量。从用户学习的结果来看,Raft 比 Paxos 更容易学习。Raft 还包括了一种新的机制来动态改变集群成员,它使用重叠大多数(overlapping majorities)来保证安全。

1、介绍

Consensus algorithms allow a collection of machines to work as a coherent group that can survive the failures of some of its members. Because of this, they play a key role in building reliable large-scale software systems. Paxos [15, 16] has dominated the discussion of consensus algorithms over the last decade: most implementations of consensus are based on Paxos or influenced by it, and Paxos has become the primary vehicle used to teach students about consensus.

共识算法允许一群机器像一个整体一样工作,即使其中的一些成员发生故障也不会出现问题。基于这一点,它在构建可靠的大规模软件系统的过程中起着关键的作用。Paxos[15, 16]一直主导着过去十年间对共识算法的讨论:许多共识算法的实现都是以Paxos为基础或者受到它的影响,并且Paxos已经成为了用来教授学生关于共识算法的主要工具。

Unfortunately, Paxos is quite difficult to understand, in spite of numerous attempts to make it more approachable. Furthermore, its architecture requires complex changes to support practical systems. As a result, both system builders and students struggle with Paxos.

不幸的是,Paxos太难以理解了,尽管已经做了很多尝试想使它变得更加平易近人。并且,为了方便构建实际的系统,它的结构也需要做出非常复杂的改变。因此,系统架构师和学生都对Paxos感到很痛苦。

After struggling with Paxos ourselves, we set out to find a new consensus algorithm that could provide a better foundation for system building and education. Our approach was unusual in that our primary goal was understandability: could we define a consensus algorithm for practical systems and describe it in a way that is significantly easier to learn than Paxos? Furthermore, we wanted the algorithm to facilitate the development of intuitions that are essential for system builders. It was important not just for the algorithm to work, but for it to be obvious why it works.

在我们和Paxos经历了一番痛苦挣扎之后,我们开始寻找一种新的共识算法来为系统的构建和教学提供更好的基础。我们的首要目标比较特殊,为了让它更加易于理解:我们能否为实际的系统构建一个比Paxos更加易于理解的共识算法?此外,我们希望该算法能够培养系统构建者的开发直觉。而这对系统构建者是必不可少的。重要的是不仅算法要起作用,而且要清楚它为什么会起作用。

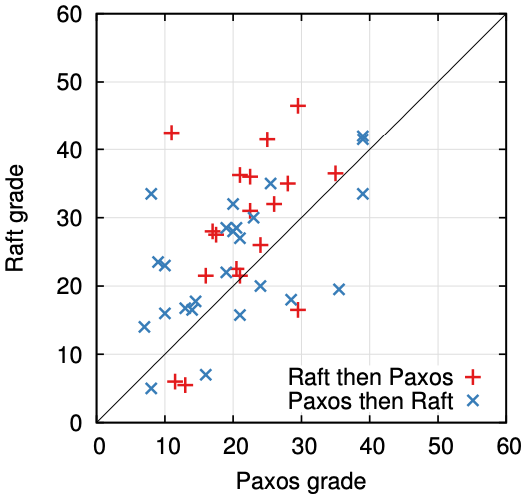

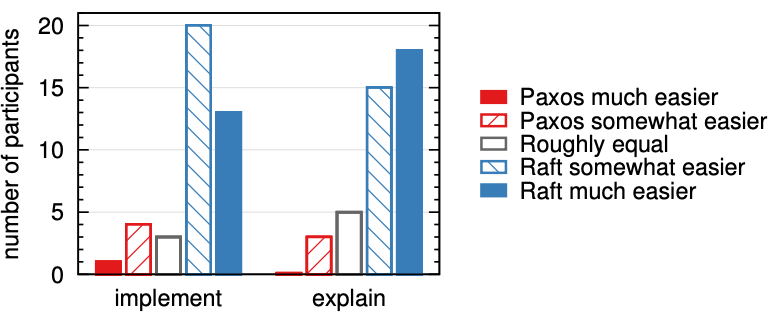

The result of this work is a consensus algorithm called Raft. In designing Raft we applied specific techniques to improve understandability, including decomposition (Raft separates leader election, log replication, and safety) and state space reduction (relative to Paxos, Raft reduces the degree of nondeterminism and the ways servers can be inconsistent with each other). A user study with 43 students at two universities shows that Raft is significantly easier to understand than Paxos: after learning both algorithms, 33 of these students were able to answer questions about Raft better than questions about Paxos.

这项工作的结果就是一个叫做Raft的共识算法。在设计Raft的时候,我们使用了特定的技术来提高可理解性,包括分割(Raft分离了领导者选举、日志复制和安全性)以及状态空间的减少(和Paxos相比,Raft降低了不确定性的程度以及服务器之间数据不一致的方式)。在对两所大学的43名学生的用户调研后表明,Raft比Paxos更加容易理解。在同时学习了两种方法之后,其中的33名学生回答Raft的问题要比回答Paxos的更好。

Raft is similar in many ways to existing consensus algorithms (most notably, Oki and Liskov’s Viewstamped Replication [29, 22]), but it has several novel features:

Raft在很多方面和现存的共识算法类似,但是它也有以下这些独特的特性:

- Strong leader: Raft uses a stronger form of leadership than other consensus algorithms. For example, log entries only flow from the leader to other servers. This simplifies the management of the replicated log and makes Raft easier to understand.

- Leader election: Raft uses randomized timers to elect leaders. This adds only a small amount of mechanism to the heartbeats already required for any consensus algorithm, while resolving conflicts simply and rapidly.

- Membership changes: Raft’s mechanism for changing the set of servers in the cluster uses a new joint consensus approach where the majorities of two different configurations overlap during transitions. This allows the cluster to continue operating normally during configuration changes.

- 强领导者:Raft使用了比其他共识算法更强的领导形式。例如,日志条目只能从领导者流向其他服务器。这简化了对复制日志的管理,并使Raft更易于理解。

- 领导者选举:Raft使用随机的时钟来选举领导者。这只是在共识算法原有的心跳检测的基础上增加了少量的特殊机制。使得冲突解决变得更加简单快速。

- 成员变更:Raft使用了一种新的联合共识的方法来处理集群成员变更的问题,处于调整过程中的两种不同的配置集群中大多数机器会有重叠,这就使得集群在成员变换的时候依然可以继续工作。

We believe that Raft is superior to Paxos and other consensus algorithms, both for educational purposes and as a foundation for implementation. It is simpler and more understandable than other algorithms; it is described completely enough to meet the needs of a practical system; it has several open-source implementations and is used by several companies; its safety properties have been formally specified and proven; and its efficiency is comparable to other algorithms.

我们相信不论是用于教学还是作为系统实现的基础,Raft都要优于Paxos和其他的共识算法。它比其他算法更简单也更加易于理解;它能完全满足实际系统的需求;它有很多开源的实现并且被很多公司使用;它的安全性已经被完全证实了;并且它的效率也完全可以和其他算法相媲美。

The remainder of the paper introduces the replicated state machine problem (Section 2), discusses the strengths and weaknesses of Paxos (Section 3), describes our general approach to understandability (Section 4), presents the Raft consensus algorithm (Sections 5–8), evaluates Raft (Section 9), and discusses related work (Section 10).

本文的第2章介绍了状态机的相关问题,第3章描述了Paxos的优缺点,第4章介绍了我们达成可理解性目标的一些方法,第5到8章详细介绍了 Raft 共识算法,第9章描述了对Raft的评估,第10章讨论了Raft相关一些成果。

2、复制状态机

Consensus algorithms typically arise in the context of replicated state machines [37]. In this approach, state machines on a collection of servers compute identical copies of the same state and can continue operating even if some of the servers are down. Replicated state machines are used to solve a variety of fault tolerance problems in distributed systems. For example, large-scale systems that have a single cluster leader, such as GFS [8], HDFS [38], and RAMCloud [33], typically use a separate replicated state machine to manage leader election and store configuration information that must survive leader crashes. Examples of replicated state machines include Chubby [2] and ZooKeeper [11].

共识算法是在复制状态机[37]的背景下提出来的。在这个方法中,一组服务器上的状态机对同一个状态计算并产生多个完全相同的副本,这使得即使其中一些服务器崩溃了,这组服务器也还可以继续正常运行。复制状态机通常用于解决分布式系统中容错相关的一系列问题。例如,GFS[8],HDFS[38], RAMCloud[33],这些拥有单一集群领导者的大规模应用系统,会使用一个独立的复制状态机来管理领导选取及存储集群配置信息来应对领导者的崩溃。复制状态机典型的例子包括 Chubby[2] 和 ZooKeeper[11]。

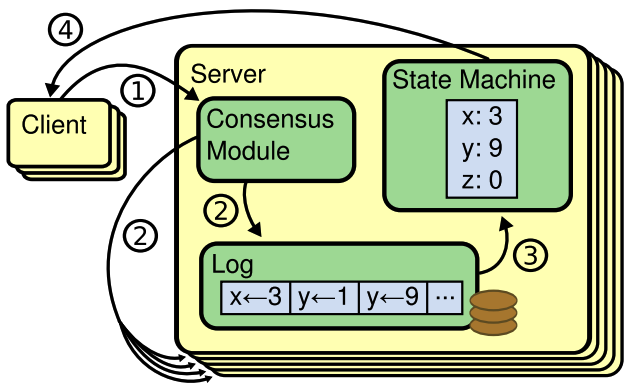

The consensus algorithm manages a replicated log containing state machine commands from clients. The state machines process identical sequences of commands from the logs, so they produce the same outputs.

共识算法管理着一个复制日志,其中包含来自客户端的状态机命令。状态机负责处理日志中相同的命令序列,因此它们会产生相同的输出。

Replicated state machines are typically implemented using a replicated log, as shown in Figure 1. Each server stores a log containing a series of commands, which its state machine executes in order. Each log contains the same commands in the same order, so each state machine processes the same sequence of commands. Since the state machines are deterministic, each computes the same state and the same sequence of outputs.

如图 1 所示,复制状态机通常使用复制日志来实现。每台服务器存储一份包含一系列命令的日志,内部状态机依照日志中的命令顺序执行。因为每台机器的状态机都是确定的,所以计算将得到同样的状态和输出结果。

Keeping the replicated log consistent is the job of the consensus algorithm. The consensus module on a server receives commands from clients and adds them to its log. It communicates with the consensus modules on other servers to ensure that every log eventually contains the same requests in the same order, even if some servers fail. Once commands are properly replicated, each server’s state machine processes them in log order, and the outputs are returned to clients. As a result, the servers appear to form a single, highly reliable state machine.

共识算法的任务就是保证复制日志的一致性。服务器上的共识模块,接收来自客户端的命令,并追加到日志中。它和其它服务器上的共识模块进行通信,确保每一个服务器上的日志都包含相同顺序的相同请求,即使其中的一些服务宕机了。一旦命令被正确地复制,每个服务器的状态机就会按日志顺序处理它们,并将输出返回给客户机。结果就是服务器似乎形成了一个单一的、高度可靠的状态机。

Consensus algorithms for practical systems typically have the following properties:

实际应用中的共识算法通常具有以下特性:

- They ensure safety (never returning an incorrect result) under all non-Byzantine conditions, including network delays, partitions, and packet loss, duplication, and reordering.

- They are fully functional (available) as long as any majority of the servers are operational and can communicate with each other and with clients. Thus, a typical cluster of five servers can tolerate the failure of any two servers. Servers are assumed to fail by stopping; they may later recover from state on stable storage and rejoin the cluster.

- They do not depend on timing to ensure the consistency of the logs: faulty clocks and extreme message delays can, at worst, cause availability problems.

- In the common case, a command can complete as soon as a majority of the cluster has responded to a single round of remote procedure calls; a minority of slow servers need not impact overall system performance.

- 确保非拜占庭情况下的安全性(从来不会返回一个错误的结果),包括网络的延迟、分区及数据包的丢包、冗余和乱序情况。

- 只要集群主体中的大多数机器能够运行,并且可以相互通信和与客户机通信,这个集群就可用。因此,一个拥有 5 台机器的集群最多可以容忍其中的 2 台的宕机。假定服务器因停止而发生了故障;它们可能稍后就会恢复稳定存储状态并重新加入集群。

- 不依赖于时间来确保日志的一致性:错误的时钟和极端的消息延迟在最坏的情况下会导致可用性的问题。

- 通常情况下,只要集群的大多数成员响应了一轮远程过程调用,命令就可以完成;少数慢速服务器不会影响总体的系统性能。

3、Paxos有什么问题?

Over the last ten years, Leslie Lamport’s Paxos protocol [15] has become almost synonymous with consensus: it is the protocol most commonly taught in courses, and most implementations of consensus use it as a starting point. Paxos first defines a protocol capable of reaching agreement on a single decision, such as a single replicated log entry. We refer to this subset as single-decree Paxos. Paxos then combines multiple instances of this protocol to facilitate a series of decisions such as a log (multi-Paxos). Paxos ensures both safety and liveness, and it supports changes in cluster membership. Its correctness has been proven, and it is efficient in the normal case.

在过去的十年中,Leslie Lamport的Paxos协议[15]几乎成为了共识算法的代名词:它是授课中最常讲授的算法,同时也是许多共识算法实现的起点。Paxos首先定义了一个能够就单个决策达成一致的协议,例如单个复制的日志条目。我们将这个子集称为单一决策 Paxos。然后,Paxos将该协议的多个实例组合起来从而形成一系列决策,例如日志(multi-Paxos)。Paxos既保证了安全性又保证了活跃性,同时它支持集群成员角色的变更。它的正确性已被证明了并且在一般的情况下也被证明是高效的。

Unfortunately, Paxos has two significant drawbacks. The first drawback is that Paxos is exceptionally difficult to understand. The full explanation [15] is notoriously opaque; few people succeed in understanding it, and only with great effort. As a result, there have been several attempts to explain Paxos in simpler terms [16, 20, 21]. These explanations focus on the single-decree subset, yet they are still challenging. In an informal survey of attendees at NSDI 2012, we found few people who were comfortable with Paxos, even among seasoned researchers. We struggled with Paxos ourselves; we were not able to understand the complete protocol until after reading several simplified explanations and designing our own alternative protocol, a process that took almost a year.

不幸的是,Paxos有两个显著的缺点。第一个是 Paxos 太难以理解。众所周知,它的完整说明是出乎寻常的晦涩,很少有人能够完全理解它。因此,人们曾多次尝试用更简单的术语来解释Paxos[16,20,21]。虽然它们都侧重于single-decree subset的版本,但是仍然非常具有挑战性。在一项针对NSDI 2012与会者的非正式调查中,我们发现很少有人对Paxos感到舒服,即使是那些有着丰富经验的研究人员。我们自己也对Paxos感到非常痛苦,我们在阅读了几个简化版的描述以及设计了我们自己的替代协议后才能够理解完整的协议,而这整个过程持续了将近一年。

We hypothesize that Paxos’ opaqueness derives from its choice of the single-decree subset as its foundation. Single-decree Paxos is dense and subtle: it is divided into two stages that do not have simple intuitive explanations and cannot be understood independently. Because of this, it is difficult to develop intuitions about why the singledecree protocol works. The composition rules for multiPaxos add significant additional complexity and subtlety. We believe that the overall problem of reaching consensus on multiple decisions (i.e., a log instead of a single entry) can be decomposed in other ways that are more direct and obvious.

我们认为Paxos的晦涩来源于它将single-decree subset作为自己的基础。Single-decree Paxos被认为是微妙的:它被划分为两个阶段,它们没有简单直观的解释,也不能被独立理解。因此,这就导致了很难对single-decree协议是如何工作的进行联想。而multi-Paxos的组成规则(composition rule)则更加添加了复杂性。我们认为,就多项决策达成共识的总体问题(即,一个日志而不是一个条目)可以用其他更直接、更明显的方式来分解。

The second problem with Paxos is that it does not provide a good foundation for building practical implementations. One reason is that there is no widely agreedupon algorithm for multi-Paxos. Lamport’s descriptions are mostly about single-decree Paxos; he sketched possible approaches to multi-Paxos, but many details are missing. There have been several attempts to flesh out and optimize Paxos, such as [26], [39], and [13], but these differ from each other and from Lamport’s sketches. Systems such as Chubby [4] have implemented Paxos-like algorithms, but in most cases their details have not been published.

Paxos的第二个问题是它没有为构建实际的实现提供良好的基础。一大原因是multi-Paxos没有一个广受认可的算法。Lamport的描述主要针对的是single-decree Paxos;它为multi-Paxos提供了一个大概的框架,但是很多细节并没有提及。对于充实以及优化Paxos已经做了很多努力,例如[26]、[39]和[13],但是它们各自之间,以及和Lamport的概述都不相同。像Chubby这样的系统已经实现了类Paxos算法,但是它的很多细节并没有公开。

Furthermore, the Paxos architecture is a poor one for building practical systems; this is another consequence of the single-decree decomposition. For example, there is little benefit to choosing a collection of log entries independently and then melding them into a sequential log; this just adds complexity. It is simpler and more efficient to design a system around a log, where new entries are appended sequentially in a constrained order. Another problem is that Paxos uses a symmetric peer-to-peer approach at its core (though it eventually suggests a weak form of leadership as a performance optimization). This makes sense in a simplified world where only one decision will be made, but few practical systems use this approach. If a series of decisions must be made, it is simpler and faster to first elect a leader, then have the leader coordinate the decisions.

此外,Paxos的架构也不利于构建实际系统;这是它按single-decree分解的另一个后果。例如,独立地选取一系列的日志条目并且将它们融合成一个顺序的日志并没有太多好处,仅仅只是增加了复杂度。相反,构建一个围绕按顺序扩展日志的系统是更简单和高效的。Paxos的另一个问题是它将对称的点对点(peer-to-peer)作为核心(虽然在最后为了优化性能建议了一种弱领导者形式)。这在只需要做一个决策的简单场景中是可行的,但是很少有实际的系统会使用这种方法。如果需要进行一系列的决策,那么先选择一个领导者,然后再让领导者去协调决策会更加简单快捷。

As a result, practical systems bear little resemblance to Paxos. Each implementation begins with Paxos, discovers the difficulties in implementing it, and then develops a significantly different architecture. This is timeconsuming and error-prone, and the difficulties of understanding Paxos exacerbate the problem. Paxos’ formulation may be a good one for proving theorems about its correctness, but real implementations are so different from Paxos that the proofs have little value. The following comment from the Chubby implementers is typical:

因此,实际构建出的系统与Paxos几乎没有相似之处。每个实现都从Paxos开始,然后发现实现起来很困难,于是最后开发出了一个完全不同的架构。这是极其费时并且容易出错的,而Paxos的难以理解则更加加剧了这个问题。Paxos的正确性理论很好证明,但是实际的实现和Paxos太过不同,因此这些证明就没什么价值了。接下来这段来自Chubby的评论是非常典型的:

- There are significant gaps between the description of the Paxos algorithm and the needs of a real-world system. . . . the final system will be based on an unproven protocol [4].

- Paxos算法的描述和现实世界的系统需求之间有着巨大的矛盾…而最终实现的系统都将建立在一个未经证明的协议之上[4]。

Because of these problems, we concluded that Paxos does not provide a good foundation either for system building or for education. Given the importance of consensus in large-scale software systems, we decided to see if we could design an alternative consensus algorithm with better properties than Paxos. Raft is the result of that experiment.

因为这些问题的存在,我们得出这样的结论,Paxos并没有为实际系统的构建或者是教学提供一个很好的基础。基于在大规模软件系统中共识的重要性,我们决定尝试能否设计出另外一种比Paxos有着更好性质的共识算法。而Raft就是我们实验得到的结果。

4、可理解性设计

We had several goals in designing Raft: it must provide a complete and practical foundation for system building, so that it significantly reduces the amount of design work required of developers; it must be safe under all conditions and available under typical operating conditions; and it must be efficient for common operations. But our most important goal—and most difficult challenge—was understandability. It must be possible for a large audience to understand the algorithm comfortably. In addition, it must be possible to develop intuitions about the algorithm, so that system builders can make the extensions that are inevitable in real-world implementations.

我们在设计Raft的时候有以下几个目标:它必须为系统构建提供一个完整并实际可行的基础,这将大大减少系统开发者的设计工作;它必须在任何情况下都能够确保安全性,并且保证在典型应用场景下的可用性;它在通常的应用操作中必须是高效的。另外,最重要的一点,也是最具挑战性的一点是它必须易于理解,从而使广大的读者能够很好的理解这个算法。 并且要能够培养出对这个算法的直觉(develop intuitions),从而让系统构建者能够在实际实现中做出必要的扩展。

There were numerous points in the design of Raft where we had to choose among alternative approaches. In these situations we evaluated the alternatives based on understandability: how hard is it to explain each alternative (for example, how complex is its state space, and does it have subtle implications?), and how easy will it be for a reader to completely understand the approach and its implications?

在设计Raft的很多节点上,我们需要在很多可选方法之间做出选择。在这些情况下,我们基于可理解性来评估这些方法:每一个可选方案的描述是否困难(比如,它的状态空间的复杂度是多少,以及它是否有其他的理解歧义?)以及读者是否能轻松地完全理解这种方法。

We recognize that there is a high degree of subjectivity in such analysis; nonetheless, we used two techniques that are generally applicable. The first technique is the well-known approach of problem decomposition: wherever possible, we divided problems into separate pieces that could be solved, explained, and understood relatively independently. For example, in Raft we separated leader election, log replication, safety, and membership changes.

后来我们意识到这种分析方法具有很强的主观性;于是我们使用了两种方法让分析变得更具通用性。第一种是众所周知的问题分解方法:在可能的情况下,我们将问题划分为可以相对独立地解决、解释和理解的部分。 例如,在Raft中,我们分离了领导者选举、日志复制、安全性和成员变更。

Our second approach was to simplify the state space by reducing the number of states to consider, making the system more coherent and eliminating nondeterminism where possible. Specifically, logs are not allowed to have holes, and Raft limits the ways in which logs can become inconsistent with each other. Although in most cases we tried to eliminate nondeterminism, there are some situations where nondeterminism actually improves understandability. In particular, randomized approaches introduce nondeterminism, but they tend to reduce the state space by handling all possible choices in a similar fashion (“choose any; it doesn’t matter”). We used randomization to simplify the Raft leader election algorithm.

我们的第二种方法是通过减少要考虑的状态来简化状态空间,使系统更加一致,并且在可能的情况下消除不确定性。 具体来说,日志不允许存在空洞,Raft限制了日志之间存在不一致的可能。尽管在大多数情况下,我们试图消除不确定性,但在某些情况下,不确定性实际上提高了可理解性。特别是随机化方法引入了不确定性,但是它们倾向于通过以类似的方式处理所有可能的选择来减少状态空间(选择哪一个并不重要)。我们使用随机化来简化了Raft的领导者选举算法。

5、Raft公式算法

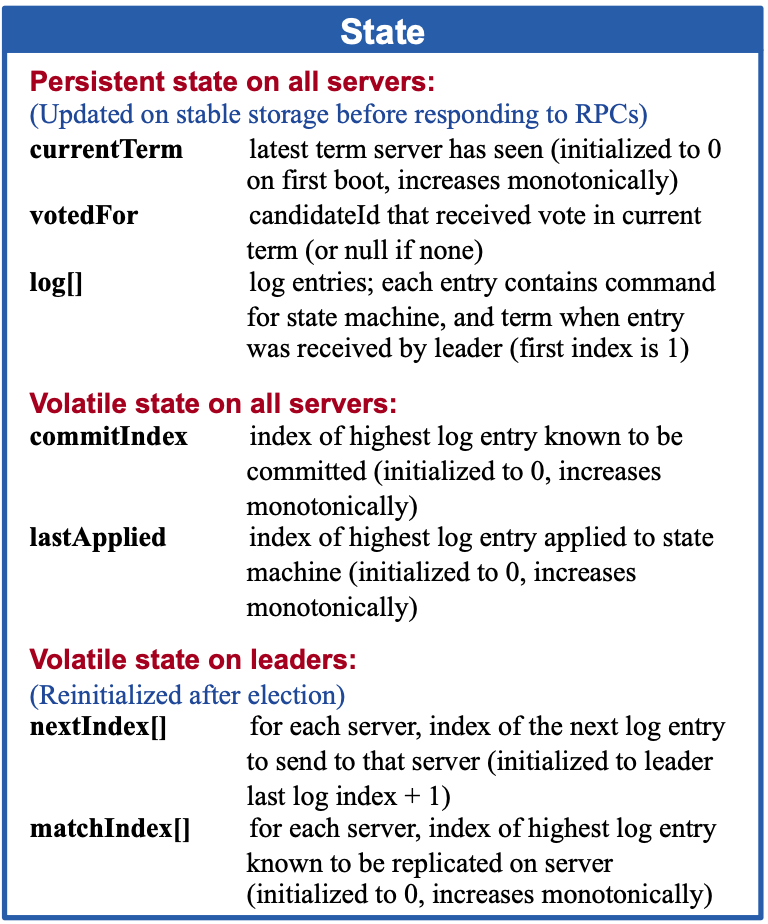

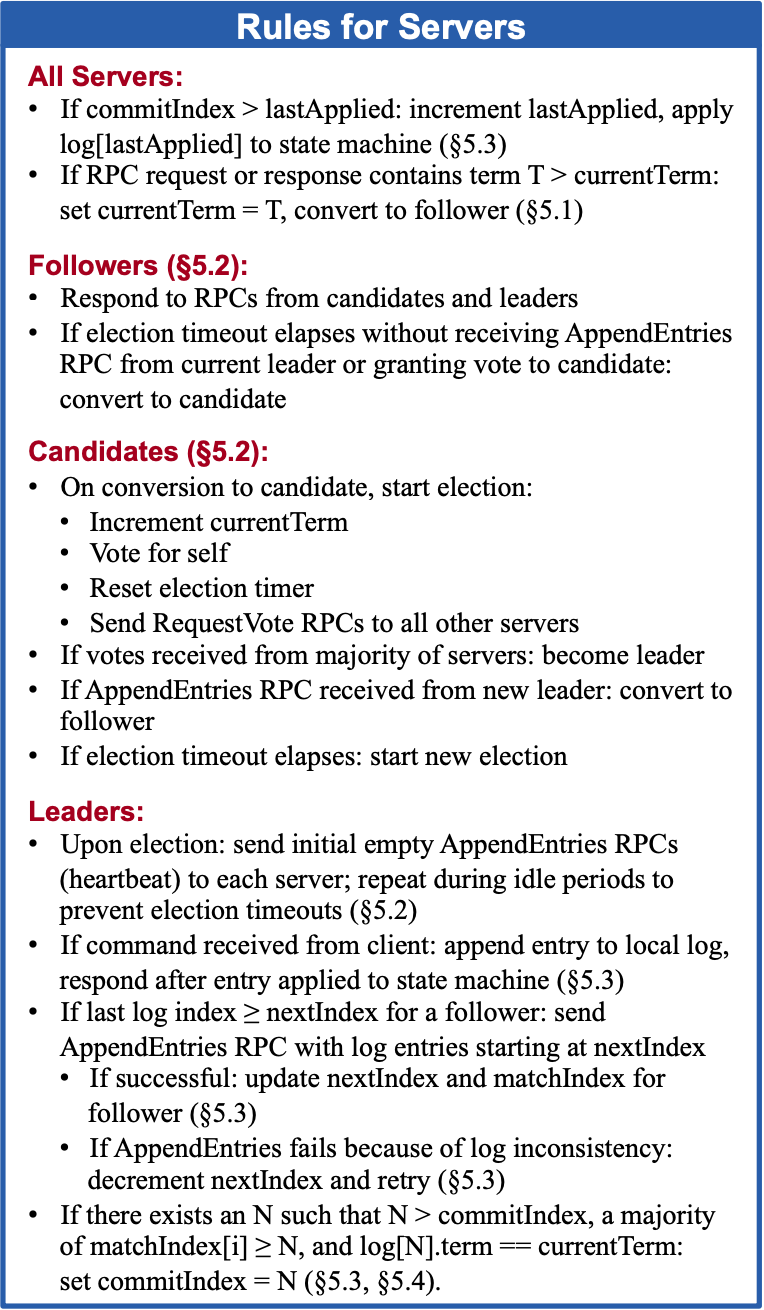

Raft is an algorithm for managing a replicated log of the form described in Section 2. Figure 2 summarizes the algorithm in condensed form for reference, and Figure 3 lists key properties of the algorithm; the elements of these figures are discussed piecewise over the rest of this section.

Raft是用于管理文章第二部分描述的复制日志算法。图2是对Raft的简要描述;图3罗列了算法的一些重要属性;接下来将会对图示部分进行分段讨论。

Figure 2: A condensed summary of the Raft consensus algorithm (excluding membership changes and log compaction). The server behavior in the upper-left box is described as a set of rules that trigger independently and repeatedly. Section numbers such as §5.2 indicate where particular features are discussed. A formal specification [31] describes the algorithm more precisely.

图2:Raft共识算法的简明摘要(不包括成员变更和日志压缩)。左上框中的服务器行为被描述为一组独立且可重复触发的规则。例如第5.2节指明讨论特定特征的位置。形式规范[31]更精确地描述了该算法。

Figure 3: Raft guarantees that each of these properties is true at all times. The section numbers indicate where each property is discussed.

图3:Raft保证这些属性在任何时候都是正确的。章节号是讨论每个属性的位置。

Raft implements consensus by first electing a distinguished leader, then giving the leader complete responsibility for managing the replicated log. The leader accepts log entries from clients, replicates them on other servers, and tells servers when it is safe to apply log entries to their state machines. Having a leader simplifies the management of the replicated log. For example, the leader can decide where to place new entries in the log without consulting other servers, and data flows in a simple fashion from the leader to other servers. A leader can fail or become disconnected from the other servers, in which case a new leader is elected.

Raft首先选举出一个唯一的领导者来实现共识,并赋予完全的管理复制日志的责任。领导者接收来自客户端的日志条目并复制到其它服务器,同时将日志条目追加到自身日志,然后告知其他服务器可以应用日志条目到状态机。领导者大大简化了日志复制的管理。例如,领导者可以自主决定日志条目的追加位置,数据以一种简单的方式从领导者流向其它服务器。领导者可能会宕机或与其他服务器断开连接,在这种情况下,将要选出新的领导者。

Given the leader approach, Raft decomposes the consensus problem into three relatively independent subproblems, which are discussed in the subsections that follow:

鉴于领导者方法,Raft将共识问题分解为三个相对独立的子问题,这些子问题将在下面的小节中讨论:

- Leader election: a new leader must be chosen when an existing leader fails (Section 5.2).

- Log replication: the leader must accept log entries from clients and replicate them across the cluster, forcing the other logs to agree with its own (Section 5.3).

- Safety: the key safety property for Raft is the State Machine Safety Property in Figure 3: if any server has applied a particular log entry to its state machine, then no other server may apply a different command for the same log index. Section 5.4 describes how Raft ensures this property; the solution involves an additional restriction on the election mechanism described in Section 5.2.

- 领导者选举:现有的领导者宕机后将选举新的领导者(第5.2节)。

- 日志复制:领导者必须能够接受来自客户端的日志条目,并复制到集群中的其它服务器,强制其他服务器的日志与自己的日志保持一致(第5.3节)。

- 安全性:Raft的关键安全性属性是图3中的状态机的安全性属性:如果任何服务器已将特定的日志条目应用于其状态机,那么其他服务器都不能对同一个日志索引应用不同的命令。第5.4节描述了Raft如何确保该特性;解决方案中包括了对第5.2节所述选举机制的额外限制。

After presenting the consensus algorithm, this section discusses the issue of availability and the role of timing in the system.

在介绍了共识算法之后,本节讨论了可用性问题以及时机(the role of timing)在系统中的作用。

5.1、Raft基础

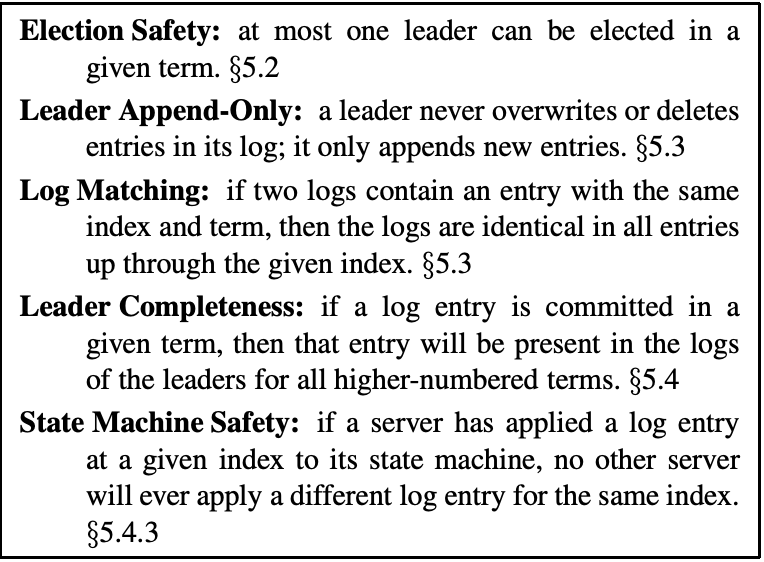

A Raft cluster contains several servers; five is a typical number, which allows the system to tolerate two failures. At any given time each server is in one of three states: leader, follower, or candidate. In normal operation there is exactly one leader and all of the other servers are followers. Followers are passive: they issue no requests on their own but simply respond to requests from leaders and candidates. The leader handles all client requests (if a client contacts a follower, the follower redirects it to the leader). The third state, candidate, is used to elect a new leader as described in Section 5.2. Figure 4 shows the states and their transitions; the transitions are discussed below.

一个 Raft 集群包括若干个服务器;对于一个典型的拥有 5 个服务器的集群来说,最多能够容忍 2 台服务器宕机。集群运行期间,服务器都会处于三个状态之中:领导者(Leader) 、跟随者(Follower)、候选者(Candidate)。正常情况下,只有一个服务器处于领导者状态,其它的都是跟随者。跟随者是被动的:他们不发送任何请求,只是简单的响应来自领导者和候选者的请求。领导者来处理所有来自客户端的请求(如果一个客户端与跟随者进行通信,跟随者会将请求信息转发给领导者)。候选者是用来选取新的领导者的。图-4 阐述了这些状态及它们之间的转换。

Figure 4: Server states. Followers only respond to requests from other servers. If a follower receives no communication, it becomes a candidate and initiates an election. A candidate that receives votes from a majority of the full cluster becomes the new leader. Leaders typically operate until they fail.

图4:服务器状态。跟随者只响应来自其他服务器的请求。如果一个追随者没有收到任何信息,它就会成为一个候选人并发起选举。一个获得集群中多数选票的候选者会成为新的领导者。领导者通常会一直工作直到宕机。

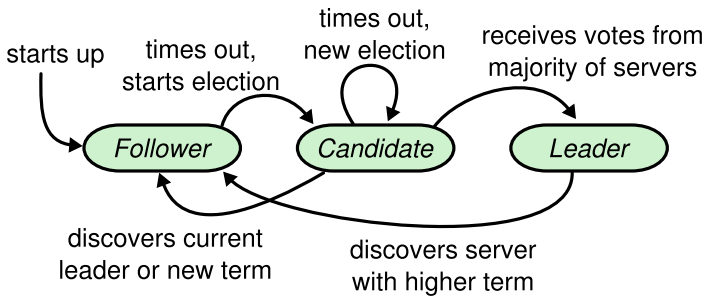

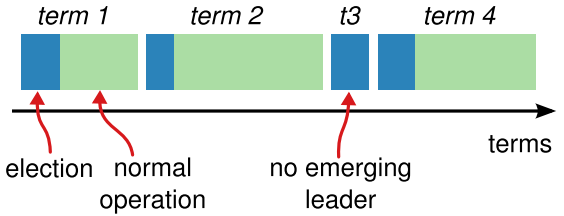

Raft divides time into terms of arbitrary length, as shown in Figure 5. Terms are numbered with consecutive integers. Each term begins with an election, in which one or more candidates attempt to become leader as described in Section 5.2. If a candidate wins the election, then it serves as leader for the rest of the term. In some situations an election will result in a split vote. In this case the term will end with no leader; a new term (with a new election) will begin shortly. Raft ensures that there is at most one leader in a given term.

Raft将时间划分为任意长度的任期(Term),如图5所示。任期用连续的整数来命名。每一个任期的选举开始时,一名或多名候选者会试图成为第5.2节所述的领导者。如果候选者在选举中获胜,那么它将在余下的任期内担任领导者。在某些情况下,选举会导致分裂投票。在这种情况下,任期将以无领导者而结束;新的任期会随即开始。Raft确保在给定的任期内最多有一个领导者。

Figure 5: Time is divided into terms, and each term begins with an election. After a successful election, a single leader manages the cluster until the end of the term. Some elections fail, in which case the term ends without choosing a leader. The transitions between terms may be observed at different times on different servers.

图5:时间被划分为任期,每届任期以选举开始。在一次成功的选举之后,只有一位领导者能够管理这个集群直到任期结束。在某些选举失败的情况下,任期结束时没有选出领导者。可以在不同的服务器上,在不同的时间段内观察转换过程。

Different servers may observe the transitions between terms at different times, and in some situations a server may not observe an election or even entire terms. Terms act as a logical clock [14] in Raft, and they allow servers to detect obsolete information such as stale leaders. Each server stores a current term number, which increases monotonically over time. Current terms are exchanged whenever servers communicate; if one server’s current term is smaller than the other’s, then it updates its current term to the larger value. If a candidate or leader discovers that its term is out of date, it immediately reverts to follower state. If a server receives a request with a stale term number, it rejects the request.

可以观察到不同的服务器会在不同的时间进行任期(Term)的转换。在某些情况下,甚至在整个任期中,服务器可能不会观察到选举。任期(Term)作为Raft的逻辑时钟[14],它们允许服务器检测过期的信息,例如过期的领导者。每个服务器都存储着一个当前任期数字,数字随任期单调递增,服务器间通信时会相互交换任期信息。如果一个服务器的任期信息比其它的服务器小,它就会更新自己的任期到当前较大的任期。如果领导者或者候选者发现自己的任期信息已经过期,那么它们会立即转换状态为跟随者。当一个服务器收到一个包含过期的任期信息的请求时,会拒绝这个请求。

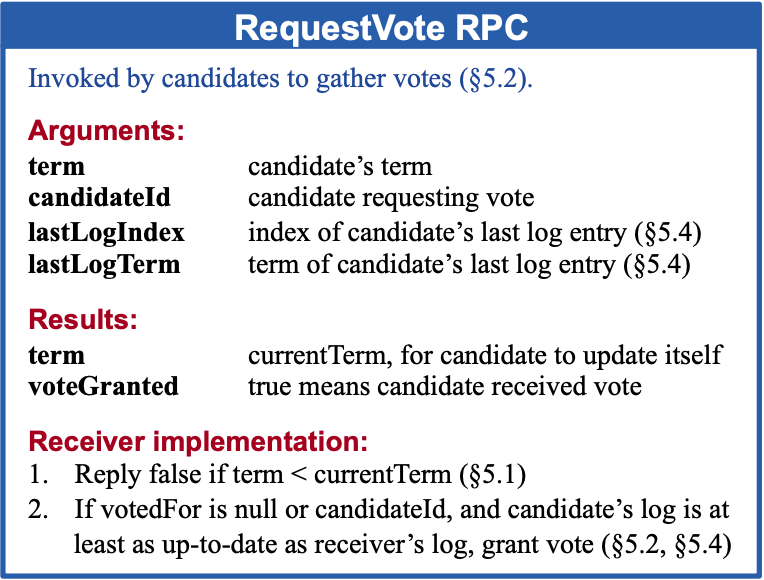

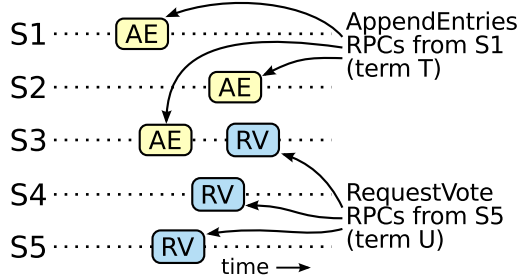

Raft servers communicate using remote procedure calls (RPCs), and the basic consensus algorithm requires only two types of RPCs. RequestVote RPCs are initiated by candidates during elections (Section 5.2), and AppendEntries RPCs are initiated by leaders to replicate log entries and to provide a form of heartbeat (Section 5.3). Section 7 adds a third RPC for transferring snapshots between servers. Servers retry RPCs if they do not receive a response in a timely manner, and they issue RPCs in parallel for best performance.

Raft服务器间通过RPC方式进行通信,基础的共识算法只需要两种类型的RPC:请求投票RPCs(RequestVote RPCs) 是候选者在选举期间发起的,并在选举时使用(第5.2节)和 复制日志条目 RPCs(Append Entries RPCs) 是由领导者发起的,在复制日志及心跳检测时使用(第5.3节)。第7节中新增了用于 在服务器之间传输快照的第三个RPC。如果服务器没有及时收到响应,则会重试RPC,通过并行发出RPC以获得最佳性能。

5.2、领导者选举

Raft uses a heartbeat mechanism to trigger leader election. When servers start up, they begin as followers. A server remains in follower state as long as it receives valid RPCs from a leader or candidate. Leaders send periodic heartbeats (AppendEntries RPCs that carry no log entries) to all followers in order to maintain their authority. If a follower receives no communication over a period of time called the election timeout, then it assumes there is no viable leader and begins an election to choose a new leader.

Raft使用心跳机制来触发领导者选举。当服务器启动时,它们会处于跟随者的状态。并且保持这种状态直到接收到来自领导者或者候选者的合法RPCs。领导者会定期向所有追随者发送心跳信号(AppendEntries RPCs 是不携带日志条目的RPC),以保持它们的领导者地位。如果一个跟随者在选举超时的时间内没有收到任何通信,那么它就假定没有有效的领导者,并开始新一轮的选举以选择出新的领导人。

To begin an election, a follower increments its current term and transitions to candidate state. It then votes for itself and issues RequestVote RPCs in parallel to each of the other servers in the cluster. A candidate continues in this state until one of three things happens: (a) it wins the election, (b) another server establishes itself as leader, or © a period of time goes by with no winner. These outcomes are discussed separately in the paragraphs below.

为了开始进行选举,跟随者会增加其当前任期并切换到候选者状态。然后它为自己投票,并向集群中的其他服务器并行发出请求投票请求(RequestVote RPCs)。候选者会一直保持自身状态,直到以下三种情况中的任何一种发生:(a)它赢得了选举,成为了领导者(b)其他候选者赢得了领导者的地位,(c)选举超时,未能成功的选出领导者。这些结果将在下文各段中单独讨论。

A candidate wins an election if it receives votes from a majority of the servers in the full cluster for the same term. Each server will vote for at most one candidate in a given term, on a first-come-first-served basis (note: Section 5.4 adds an additional restriction on votes). The majority rule ensures that at most one candidate can win the election for a particular term (the Election Safety Property in Figure 3). Once a candidate wins an election, it becomes leader. It then sends heartbeat messages to all of the other servers to establish its authority and prevent new elections.

如果候选者在同一任期内收到来自整个集群中大多数服务器的投票,那么它将赢得选举。每个服务器都按照先到先服务的原则投出它有且仅有一个的选票。多数原则确保了在特定的任期内最多有一个候选者能够赢得选举(图3中的选举安全属性)。一旦候选者赢得选举,它就成为了领导者。然后,它向其他的所有服务器发送心跳消息,告知自身的领导者状态并阻止新的选举。

While waiting for votes, a candidate may receive an AppendEntries RPC from another server claiming to be leader. If the leader’s term (included in its RPC) is at least as large as the candidate’s current term, then the candidate recognizes the leader as legitimate and returns to follower state. If the term in the RPC is smaller than the candidate’s current term, then the candidate rejects the RPC and continues in candidate state.

在等待投票时,候选者可能会收到其他声称是领导者的复制日志条目 RPC( AppendEntries RPC)。如果领导者的任期(被包含在它的RPC请求中)和候选者的当前任期相同(或者大于候选者的任期),那么候选者就承认领导者是合法的,并且从候选者状态转换成跟随者的状态。如果RPC请求中的任期信息小于当前候选者的任期,当前候选者则会拒绝该RPC并且继续处于候选者状态。

The third possible outcome is that a candidate neither wins nor loses the election: if many followers become candidates at the same time, votes could be split so that no candidate obtains a majority. When this happens, each candidate will time out and start a new election by incrementing its term and initiating another round of RequestVote RPCs. However, without extra measures split votes could repeat indefinitely.

第三种可能的结果是候选者既没有赢得选举也没有输掉选举:如果很多跟随者同时成为候选者,选票就可能会被分割,最终可能是没有候选者获得多数票。当这种情况发生时,每一位候选者都将进入选举超时状态,之后通过增加它们的任期和发送新一轮的请求投票RPCs(RequestVote RPCs)来发起新一轮的选举。然而,如果不采用额外的措施,分裂的投票(split votes)将会无限的重复。

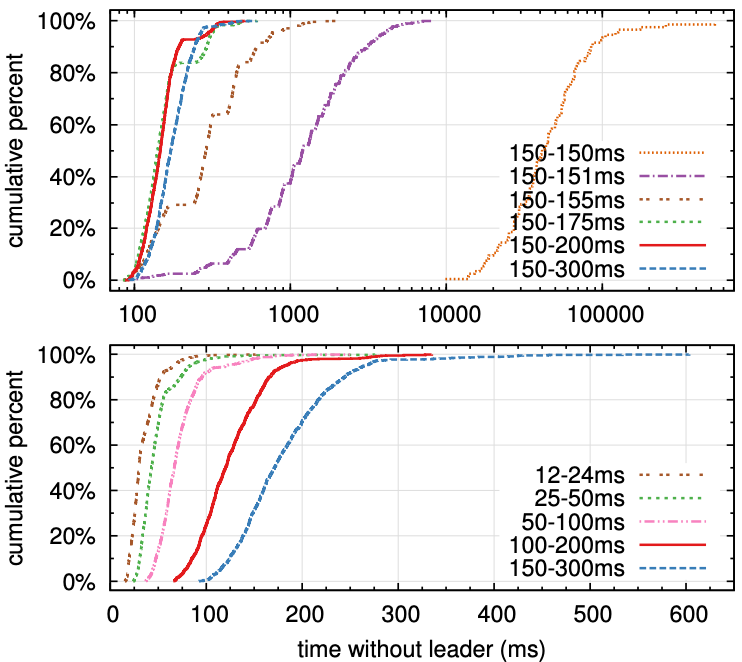

Raft uses randomized election timeouts to ensure that split votes are rare and that they are resolved quickly. To prevent split votes in the first place, election timeouts are chosen randomly from a fixed interval (e.g., 150–300ms). This spreads out the servers so that in most cases only a single server will time out; it wins the election and sends heartbeats before any other servers time out. The same mechanism is used to handle split votes. Each candidate restarts its randomized election timeout at the start of an election, and it waits for that timeout to elapse before starting the next election; this reduces the likelihood of another split vote in the new election. Section 9.3 shows that this approach elects a leader rapidly.

Raft使用随机的选举超时时间来确保分裂投票(split votes)很少发生,或者即使发生了也能很快的被解决。为了在一开始就避免分裂投票的发生,选举超时时间被设定为一个固定范围(例如150-300毫秒)中的随机值。这就能够使服务器很好的分散开来,确保在大多数场景下只会有一个服务器发生选举超时;当一个服务器赢得选举后,它能够在其他服务器选举超时之前向它们发送心跳信息。每一个候选者在选举开始时会重置一个随机的选举超时时间,然后等待超时时间的带来,之后再重新启动下一轮的选举,这就大大减少了下一次选举时分裂投票的情况发生。第9.3节表明,这种方法能够快速的选举出领导者。

Elections are an example of how understandability guided our choice between design alternatives. Initially we planned to use a ranking system: each candidate was assigned a unique rank, which was used to select between competing candidates. If a candidate discovered another candidate with higher rank, it would return to follower state so that the higher ranking candidate could more easily win the next election. We found that this approach created subtle issues around availability (a lower-ranked server might need to time out and become a candidate again if a higher-ranked server fails, but if it does so too soon, it can reset progress towards electing a leader). We made adjustments to the algorithm several times, but after each adjustment new corner cases appeared. Eventually we concluded that the randomized retry approach is more obvious and understandable.

选举这一个例子很好的说明了我们是如何根据可理解性做出设计选择的。设计之初,我们计划使用一个排名系统,每个候选者被分配一个唯一的排名,以用于候选者之间的竞争。如果一个候选者发现了另一个排名更高的候选者则会返回到跟随者状态,这样级别更高的候选者就可以很容易的赢得下一次选举。但是我们发现这种方法在可用性方面有一些小问题(当排名较高的服务器选举失败后,排名较低的服务器会等待选举超时的到来然后再次成为候选者,并开始新一轮的选举,但是如果排名较高的服务器又很快就失败了,那这就会影响领导者选举的进度)。我们对算法进行了很多次的调整,但每一次的调整都会引入新的问题。最终我们得出结论,随机重试这种方法更明确,也更易于理解。

5.3、日志复制

Once a leader has been elected, it begins servicing client requests. Each client request contains a command to be executed by the replicated state machines. The leader appends the command to its log as a new entry, then issues AppendEntries RPCs in parallel to each of the other servers to replicate the entry. When the entry has been safely replicated (as described below), the leader applies the entry to its state machine and returns the result of that execution to the client. If followers crash or run slowly, or if network packets are lost, the leader retries AppendEntries RPCs indefinitely (even after it has responded to the client) until all followers eventually store all log entries.

一旦一个领导者被选出,它就开始接受处理客户端的请求。每个客户端的请求都会包含一条需要状态机执行的命令。领导者将命令作为一个新条目追加到自身的日志中,然后并行的发送追加条目RPCs(AppendEntries RPCs)到其他的服务器进行日志条目的复制。当条目被安全的复制(如下所述)后,领导者将该条目应用于其状态机,并将执行结果返回给客户端。如果跟随者发生宕机,运行缓慢或网络数据包丢失等情况,领导者会无限次的重试发送AppendEntries RPCs,直到所有的跟随者都成功复制了所有的日志条目。

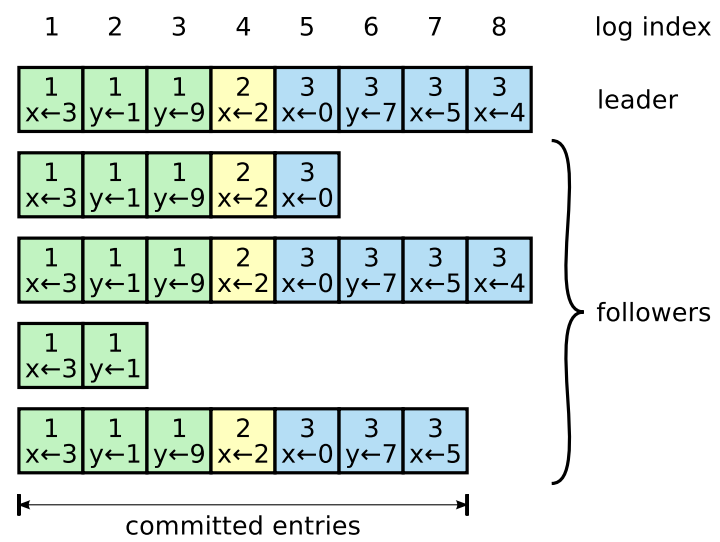

Figure 6: Logs are composed of entries, which are numbered sequentially. Each entry contains the term in which it was created (the number in each box) and a command for the state machine. An entry is considered committed if it is safe for that entry to be applied to state machines.

图6:日志由按顺序编号的条目组成。每个条目在被创建时都包含一个任期(term)(每个框中的数字)和一个状态机的命令。如果该条目可以安全地应用于状态机,则该条目被视为已提交。

Logs are organized as shown in Figure 6. Each log entry stores a state machine command along with the term number when the entry was received by the leader. The term numbers in log entries are used to detect inconsistencies between logs and to ensure some of the properties in Figure 3. Each log entry also has an integer index identifying its position in the log.

日志的存储形式如上图6所示。每个日志条目都存储着一条状态机命令和一个领导者接受条目时的任期号。日志条目中的任期号主要用于检测日志之间的不一致性,并确保图3中的某些属性。每一个日志条目都有一个整数索引,用于标识其在日志中的存储位置。

The leader decides when it is safe to apply a log entry to the state machines; such an entry is called committed. Raft guarantees that committed entries are durable and will eventually be executed by all of the available state machines. A log entry is committed once the leader that created the entry has replicated it on a majority of the servers (e.g., entry 7 in Figure 6). This also commits all preceding entries in the leader’s log, including entries created by previous leaders. Section 5.4 discusses some subtleties when applying this rule after leader changes, and it also shows that this definition of commitment is safe. The leader keeps track of the highest index it knows to be committed, and it includes that index in future AppendEntries RPCs (including heartbeats) so that the other servers eventually find out. Once a follower learns that a log entry is committed, it applies the entry to its local state machine (in log order).

领导者决定对状态机应用日志条目的安全时机,这样的条目称为提交(committed)。Raft保证提交后的条目是持久的,并且最终将被所有可用的状态机执行。当一个日志条目被集群中的大多数服务器成功复制后,它就会被领导者提交(例如图6中的条目7),这一个过程同时也会将此条目之前的所有日志条目一并提交,包括之前任期的领导者所创建的条目。第5.4节讨论了领导者发生变动后应用这个规则的微妙之处,同时也说明了这个承诺的定义是安全的。领导者会一直跟踪最新提交的日志条目索引,并将它包含在随后的Append Entries RPCs(包括心跳)中,以便其他服务器识别,并应用到自身状态机。

We designed the Raft log mechanism to maintain a high level of coherency between the logs on different servers. Not only does this simplify the system’s behavior and make it more predictable, but it is an important component of ensuring safety. Raft maintains the following properties, which together constitute the Log Matching Property in Figure 3:

我们设计了Raft的日志机制来保证不同服务器上日志之间的高度一致性。这不仅简化了系统的行为,提高了可预测性,并且也是确保安全的重要组成部分。Raft维护了以下特性,这些特性共同构成图3中的日志匹配特性:

- If two entries in different logs have the same index and term, then they store the same command.

- If two entries in different logs have the same index and term, then the logs are identical in all preceding entries.

- 如果不同日志中的两个条目具有相同的索引和任期号,则它们存储着相同的命令。

- 如果不同日志中的两个条目具有相同的索引和任期号,则之前所有的条目中的日志都是相同的。

The first property follows from the fact that a leader creates at most one entry with a given log index in a given term, and log entries never change their position in the log. The second property is guaranteed by a simple consistency check performed by AppendEntries. When sending an AppendEntries RPC, the leader includes the index and term of the entry in its log that immediately precedes the new entries. If the follower does not find an entry in its log with the same index and term, then it refuses the new entries. The consistency check acts as an induction step: the initial empty state of the logs satisfies the Log Matching Property, and the consistency check preserves the Log Matching Property whenever logs are extended. As a result, whenever AppendEntries returns successfully, the leader knows that the follower’s log is identical to its own log up through the new entries.

第一个特性表明,领导者在一个日志索引位置至多只会创建一个日志条目,并且日志中的条目位置都是固定的。第二个特性由AppendEntries执行的简单一致性检查来保证。在发送Append Entries RPCs时,领导者会将要发送的最新条目之前的条目索引(preLogIndex)及任期号(preLogTerm)包含进去,如果跟随者在其日志中找不到匹配的前置条目索引和前置任期号,则拒绝该日志条目。一致性检查执行符合递归特性:初始的空日志满足日志匹配属性(Log Matching Property),随着每一次日志扩充,一致性检查都确保符合Log Matching Property。因此,每当AppendEntries成功返回时,领导者就知道跟随者的日志在新通过的日志及之前的日志和自己的保持一致。

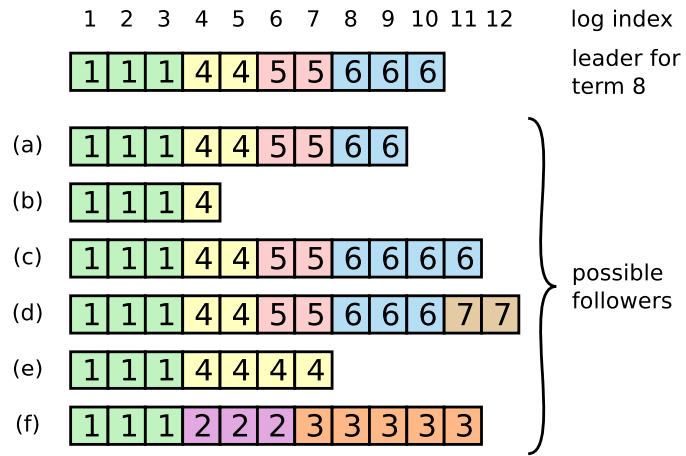

During normal operation, the logs of the leader and followers stay consistent, so the AppendEntries consistency check never fails. However, leader crashes can leave the logs inconsistent (the old leader may not have fully replicated all of the entries in its log). These inconsistencies can compound over a series of leader and follower crashes. Figure 7 illustrates the ways in which followers’ logs may differ from that of a new leader. A follower may be missing entries that are present on the leader, it may have extra entries that are not present on the leader, or both. Missing and extraneous entries in a log may span multiple terms.

正常情况下,领导者和跟随者的日志能够保持一致,因此一致性检查不会失败。但是,当领导者宕机后,就会出现日志不一致的情况(旧的领导者可能还会有一部分日志没来得及成功的复制给跟随者)。日志的不一致会随着一系列的领导者和跟随者的宕机而变得更加严重。图7 中展示了跟随者和新的领导者日志的不同之处。跟随者可能会缺少一些领导者中存在的日志条目,也有可能拥有一些领导者中不存在的日志条目,或者这两种情况都存在。日志中丢失的和无关的条目可能跨越多个任期。

Figure 7: When the leader at the top comes to power, it is possible that any of scenarios (a–f) could occur in follower logs. Each box represents one log entry; the number in the box is its term. A follower may be missing entries (a–b), may have extra uncommitted entries (c–d), or both (e–f). For example, scenario (f) could occur if that server was the leader for term 2, added several entries to its log, then crashed before committing any of them; it restarted quickly, became leader for term 3, and added a few more entries to its log; before any of the entries in either term 2 or term 3 were committed, the server crashed again and remained down for several terms.

图7:第一行的是领导者,跟随者可能有(a-f)几种场景。每个框代表一个日志条目;方框中的数字是它的任期。跟随者可能缺少条目(a–b),可能有额外的未提交条目(c–d),或者两者都有(e–f)。例如,场景(f)发生时,该服务器可能是任期2的领导者,在其日志中添加了几个条目后,然后在提交日志条目之前崩溃;它很快重新启动,然后成为了任期3的领导者,并在日志中添加了一些日志条目,在提交第2项或第3项中的日志条目之前再次宕机,并在接下来的几个任期内始终处于宕机状态。

In Raft, the leader handles inconsistencies by forcing the followers’ logs to duplicate its own. This means that conflicting entries in follower logs will be overwritten with entries from the leader’s log. Section 5.4 will show that this is safe when coupled with one more restriction.

在Raft中,领导者通过强制跟随者复制自己的日志来处理日志的不一致问题。这意味着,跟随者中不一致的日志条目会被领导者中的日志条目所覆盖。

To bring a follower’s log into consistency with its own, the leader must find the latest log entry where the two logs agree, delete any entries in the follower’s log after that point, and send the follower all of the leader’s entries after that point. All of these actions happen in response to the consistency check performed by AppendEntries RPCs. The leader maintains a nextIndex for each follower, which is the index of the next log entry the leader will send to that follower. When a leader first comes to power, it initializes all nextIndex values to the index just after the last one in its log (11 in Figure 7). If a follower’s log is inconsistent with the leader’s, the AppendEntries consistency check will fail in the next AppendEntries RPC. After a rejection, the leader decrements nextIndex and retries the AppendEntries RPC. Eventually nextIndex will reach a point where the leader and follower logs match. When this happens, AppendEntries will succeed, which removes any conflicting entries in the follower’s log and appends entries from the leader’s log (if any). Once AppendEntries succeeds, the follower’s log is consistent with the leader’s, and it will remain that way for the rest of the term.

为了使跟随者的日志与自己的日志保持一致,领导者必须找到两个日志一致的最新日志条目,删除该点之后跟随者日志中的所有条目,并在该点之后将领导者的所有条目发送给跟随者。所有这些操作都是由AppendEntries RPCs的一致性检查引发执行的。领导者为每个跟随者都维护了一个nextIndex变量,它是领导者将要发送给改跟随者的下一个日志条目的索引。当一个领导者第一次掌权时,它会将所有跟随者的nextIndex初始化为最后一个日志条目的下一个索引(图7中的11)。如果跟随者与领导者的日志不一致,AppendEntries的一致性检查就会在下一次的Append Entries RPCs中返回失败。一次失败后,领导者就会将该跟随着的nextIndex减1,然后重新发送Append Entries RPCs,如此循环往复,直到找到一个领导者和跟随者的日志能通过AppendEntries的一致性检查的nextIndex值。此时,AppendEntries将成功执行,这将删除跟随者日志中任何冲突的条目,并复制领导者此索引之后的所有日志同步给跟随者。 一旦AppendEntries成功,追随者的日志与领导者的日志是一致的,并且在余下的任期内都将保持这种状态。

If desired, the protocol can be optimized to reduce the number of rejected AppendEntries RPCs. For example, when rejecting an AppendEntries request, the follower can include the term of the conflicting entry and the first index it stores for that term. With this information, the leader can decrement nextIndex to bypass all of the conflicting entries in that term; one AppendEntries RPC will be required for each term with conflicting entries, rather than one RPC per entry. In practice, we doubt this optimization is necessary, since failures happen infrequently and it is unlikely that there will be many inconsistent entries.

如果需要的话,可以对协议进行优化,以减少被拒绝的RPC的数量。例如,当拒绝AppendEntries RPCs时,跟随者可以将包括冲突条目的任期和此任期内存储的第一个条目返回给领导者。这样,领导者就可以将nextIndex直接减去所有冲突的条目最早的那个条目。一个任期内的日志条目冲突只需要一次AppendEntries RPCs就可以,而不需要像之前那样每个条目一次AppendEntries RPCs。但是在实际应用中,我们认为此优化是完全没有必要的,因为AppendEntries RPCs请求失败并不是经常发生,并且好像也不会有很多冲突的日志条目。

With this mechanism, a leader does not need to take any special actions to restore log consistency when it comes to power. It just begins normal operation, and the logs automatically converge in response to failures of the AppendEntries consistency check. A leader never overwrites or deletes entries in its own log (the Leader Append-Only Property in Figure 3).

通过这种机制,当一个领导者掌权时,不需要采取任何额外的措施来恢复日志一致性。它只需要执行正常的操作,日志就会随着AppendEntries的一致性检查自动收敛。领导者永远不会覆盖或删除自己日志中的条目(图3中的Leader Append Only属性)。

This log replication mechanism exhibits the desirable consensus properties described in Section 2: Raft can accept, replicate, and apply new log entries as long as a majority of the servers are up; in the normal case a new entry can be replicated with a single round of RPCs to a majority of the cluster; and a single slow follower will not impact performance.

这中日志复制机制展示了我们在第2节中描述的共识属性:Raft只要在大多数服务器正常运行的情况下就能执行日志条目的接收,复制和应用。正常情况下一次RPCs就能完成一个日志条目的复制,单个跟随者的操作延迟不影响整体性能。

5.4、安全性

The previous sections described how Raft elects leaders and replicates log entries. However, the mechanisms described so far are not quite sufficient to ensure that each state machine executes exactly the same commands in the same order. For example, a follower might be unavailable while the leader commits several log entries, then it could be elected leader and overwrite these entries with new ones; as a result, different state machines might execute different command sequences.

之前的章节描述了Raft如何进行领导选举和日志复制的。但是,到目前为止所描述的机制并不能很有效的保证每一个状态机以同样的顺序执行执行同样的命令。例如,在一个跟随者不可用的时候,领导者提交了一些日志条目,然后该跟随者恢复正常后被选举成了领导者,然后使用新的日志条目覆盖掉了之前的领导者提交了但没有被成功复制的那些条目。这样的话,不同服务器的状态机可能就执行了不同的命令序列。

This section completes the Raft algorithm by adding a restriction on which servers may be elected leader. The restriction ensures that the leader for any given term contains all of the entries committed in previous terms (the Leader Completeness Property from Figure 3). Given the election restriction, we then make the rules for commitment more precise. Finally, we present a proof sketch for the Leader Completeness Property and show how it leads to correct behavior of the replicated state machine.

这一章节对于可能会被选为领导者的服务器添加了一些限制。使得特定任期内的领导者能够包含之前任期内提交的日志条目(图3中的Leader Completion属性)。通过增加了这些选举限制,我们进一步细化了提交规则。最后,我们呈现了一个领导者完备性(Leader Completeness Property)的证明草图,并展示了它是如何指导状态机正确执行的。

5.4.1、选举限制

In any leader-based consensus algorithm, the leader must eventually store all of the committed log entries. In some consensus algorithms, such as Viewstamped Replication [22], a leader can be elected even if it doesn’t initially contain all of the committed entries. These algorithms contain additional mechanisms to identify the missing entries and transmit them to the new leader, either during the election process or shortly afterwards. Unfortunately, this results in considerable additional mechanism and complexity. Raft uses a simpler approach where it guarantees that all the committed entries from previous terms are present on each new leader from the moment of its election, without the need to transfer those entries to the leader. This means that log entries only flow in one direction, from leaders to followers, and leaders never overwrite existing entries in their logs.

在任何基于领导者的共识算法(leader-based consensus algorithm)中,领导者最终都必须保存着所有提交的日志条目。在一些共识算法中,比如 Viewstamped Replication[22],即使一开始没有包含所有提交的条目,也可以选出一个领导者。这些算法包含额外的机制来识别丢失的条目,并在选举过程中或之后不久将其传送给新的领导人。不幸的是,这带来了相当多的额外机制和复杂性。Raft使用一种简单的方法使得之前领导者提交的日志条目能够在一选举出新的领导者时就能完整的程现在领导者上,而不需要任何的传送。这就意味着,日志条目只会从领导者流向跟随者,领导者永远不会覆盖其日志中的现有条目。

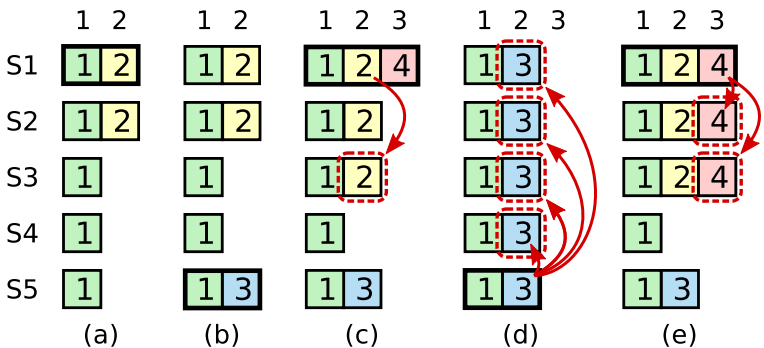

Figure 8: A time sequence showing why a leader cannot determine commitment using log entries from older terms. In (a) S1 is leader and partially replicates the log entry at index 2. In (b) S1 crashes; S5 is elected leader for term 3 with votes from S3, S4, and itself, and accepts a different entry at log index 2. In © S5 crashes; S1 restarts, is elected leader, and continues replication. At this point, the log entry from term 2 has been replicated on a majority of the servers, but it is not committed. If S1 crashes as in (d), S5 could be elected leader (with votes from S2, S3, and S4) and overwrite the entry with its own entry from term 3. However, if S1 replicates an entry from its current term on a majority of the servers before crashing, as in (e), then this entry is committed (S5 cannot win an election). At this point all preceding entries in the log are committed as well.

图 8:该时间序列显示了为什么领导者不能使用历史任期的日志条目来确定承诺(determine commitment)。 (a) S1 是领导者,部分复制了索引 2 处的日志条目。 (b) S1 崩溃,S5 被选为第 3 任期的领导者(来自S3、S4 和它自己的投票),并在日志索引 2 处接受不同的条目。 © S5 宕机, S1 重新启动,被选为领导者,并继续复制。 此时第 2 项的日志条目已在大多数服务器上复制,但尚未提交。 (d)S1再次宕机,则S5可以被选为领导(来自S2,S3和S4的投票)并从第 3 任期中覆盖其自身条目的条目。但是,如果S1在崩溃之前在大多数服务器上复制了其当前任期的条目,如(e)中所示,之后该条目被提交(则S5无法赢得选举)。此时,日志中所有前面的条目也将提交。

Raft uses the voting process to prevent a candidate from winning an election unless its log contains all committed entries. A candidate must contact a majority of the cluster in order to be elected, which means that every committed entry must be present in at least one of those servers. If the candidate’s log is at least as up-to-date as any other log in that majority (where “up-to-date” is defined precisely below), then it will hold all the committed entries. The RequestVote RPC implements this restriction: the RPC includes information about the candidate’s log, and the voter denies its vote if its own log is more up-to-date than that of the candidate.

Raft使用投票过程来阻止候选者赢得选举,除非它的日志包含所有提交的条目。候选者必须联系集群的大多数成员才能当选,这意味着每个提交的条目必须至少出现在其中一个服务器中。如果候选者的日志至少和大多数人的日志一样都是最新的(下面精确地定义了“最新”),那么它将保存所有提交的条目。RequestVote RPC实现了这个限制:RPC中包含了关于候选者日志的信息,如果投票者自己的日志比候选者的日志更新得多,投票者就拒绝投票。

Raft determines which of two logs is more up-to-date by comparing the index and term of the last entries in the logs. If the logs have last entries with different terms, then the log with the later term is more up-to-date. If the logs end with the same term, then whichever log is longer is more up-to-date.

Raft通过比较两个服务器上日志的最后一个日志条目的任期和索引来决定谁的日志时最新的。任期不同,则任期大的日志新。任期相同,则索引大的日志新。

5.4.2、提交前置任期的条目

As described in Section 5.3, a leader knows that an entry from its current term is committed once that entry is stored on a majority of the servers. If a leader crashes before committing an entry, future leaders will attempt to finish replicating the entry. However, a leader cannot immediately conclude that an entry from a previous term is committed once it is stored on a majority of servers. Figure 8 illustrates a situation where an old log entry is stored on a majority of servers, yet can still be overwritten by a future leader.

如第 5.3 节所述,一旦该条目存储在大多数服务器上,领导者就知道其当前任期中的条目已提交。 如果领导者在提交条目之前崩溃,未来的领导者将尝试完成复制该条目。 然而,领导者不能立即断定前一任期的条目是否已经存储在大多数服务器上并完成了提交。 图 8 中说明了一种场景,存在大多数服务器上的日志条目被新的领导者的日志给覆盖了。

Figure 9: If S1 (leader for term T) commits a new log entry from its term, and S5 is elected leader for a later term U, then there must be at least one server (S3) that accepted the log entry and also voted for S5.

图 9:如果 S1(任期 T 的领导者)在其任期中提交了一个新的日志条目,并且 S5 被选为以后任期 U 的领导者,那么必须至少有一个服务器(S3)接受该日志条目并投票给 S5。

To eliminate problems like the one in Figure 8, Raft never commits log entries from previous terms by counting replicas. Only log entries from the leader’s current term are committed by counting replicas; once an entry from the current term has been committed in this way, then all prior entries are committed indirectly because of the Log Matching Property. There are some situations where a leader could safely conclude that an older log entry is committed (for example, if that entry is stored on every server), but Raft takes a more conservative approach for simplicity.

为了消除图 8 中的这种问题,Raft 从来不会通过计算副本数来决定是否提交上一个任期的日志条目。 只有领导者当期的日志条目需要通过计算备份数来决定提交。一旦当前任期内的一个日志条目以这种方式被提交了,那么根据 Log Matching Property 的限制,所有之前的所有日志条目也就间接的被提交了。在某些情况下,领导者能够立即识别一个旧的日志条目是否被提交了(例如,如果该条目存储在每个服务器上),但是Raft为了简洁,选择了使用更加保守的方法。

Raft incurs this extra complexity in the commitment rules because log entries retain their original term numbers when a leader replicates entries from previous terms. In other consensus algorithms, if a new leader rereplicates entries from prior “terms,” it must do so with its new “term number.” Raft’s approach makes it easier to reason about log entries, since they maintain the same term number over time and across logs. In addition, new leaders in Raft send fewer log entries from previous terms than in other algorithms (other algorithms must send redundant log entries to renumber them before they can be committed).

Raft 在提交规则中会产生这种额外的复杂性,因为当领导者从以前的任期复制条目时,日志条目会保留其原始任期号。 在其他共识算法中,如果一个新的领导者从之前的“任期”中重新复制条目,它必须使用新的“任期号”这样做。 Raft 的方法使推理日志条目变得更容易,因为它们随着时间的推移和跨日志保持相同的术语编号。 此外,与其他算法相比,Raft 中的新领导者发送的之前任期中的日志条目更少(其他算法必须发送冗余日志条目来重新编号,然后才能提交)。

5.4.3、安全性论证

Given the complete Raft algorithm, we can now argue more precisely that the Leader Completeness Property holds (this argument is based on the safety proof; see Section 9.2). We assume that the Leader Completeness Property does not hold, then we prove a contradiction. Suppose the leader for term T (leaderT) commits a log entry from its term, but that log entry is not stored by the leader of some future term. Consider the smallest term U > T whose leader (leaderU) does not store the entry.

给出了完整的 Raft 算法,我们现在可以进一步的对领导者完备性(Leader Completeness Property)进行论证。(这个论证基于安全性证明,参见第 9.2 节)。 首先我们假设Leader Completeness Property 不成立,那么我们需要提出一个矛盾点。 假设任期为T的领导者T (Leader T) 提交了其任期内的日志条目,但是这个日志条目并没有被之后任期的领导者存储。 假设存在没有存储这条日志条目的领导者U(Leader U),其中任期U大于任期T。

- The committed entry must have been absent from leaderU’s log at the time of its election (leaders never delete or overwrite entries).

- leaderT replicated the entry on a majority of the cluster, and leaderU received votes from a majority of the cluster. Thus, at least one server (“the voter”) both accepted the entry from leaderT and voted for leaderU, as shown in Figure 9. The voter is key to reaching a contradiction.

- The voter must have accepted the committed entry from leaderT before voting for leaderU; otherwise it would have rejected the AppendEntries request from leaderT (its current term would have been higher than T).

- The voter still stored the entry when it voted for leaderU, since every intervening leader contained the entry (by assumption), leaders never remove entries, and followers only remove entries if they conflict with the leader.

- The voter granted its vote to leaderU, so leaderU’s log must have been as up-to-date as the voter’s. This leads to one of two contradictions.

- First, if the voter and leaderU shared the same last log term, then leaderU’s log must have been at least as long as the voter’s, so its log contained every entry in the voter’s log. This is a contradiction, since the voter contained the committed entry and leaderU was assumed not to.

- Otherwise, leaderU’s last log term must have been larger than the voter’s. Moreover, it was larger than T, since the voter’s last log term was at least T (it contains the committed entry from term T). The earlier leader that created leaderU’s last log entry must have contained the committed entry in its log (by assumption). Then, by the Log Matching Property, leaderU’s log must also contain the committed entry, which is a contradiction.

- This completes the contradiction. Thus, the leaders of all terms greater than T must contain all entries from term T that are committed in term T.

- The Log Matching Property guarantees that future leaders will also contain entries that are committed indirectly, such as index 2 in Figure 8(d).

- 在Leader U当选时,它的日志里面肯定没有这个已经被提交的日志条目。(领导者永远不会删除或覆盖条目)。

- LeaderT 已经将该日志条目复制给了集群中的大多数成员,LeaderU在选举时收到了集群大多数成员的投票。 因此,至少有一个服务器(”“投票者”)同时接受了来自 LeaderT 的日志条目并投票给了 LeaderU,如图 9 所示。投票者是达成矛盾的关键。

- 投票者在给LeaderU之前必然已经接受了LeaderT提交的日志条目,否则它将拒绝来自 LeaderT 的 AppendEntries 请求(拒绝的时候,其当前任期将高于 T)。

- 投票者在投票给 LeaderU 时还存储该条目,因为每个参与其中的领导者都包含该条目(假设),领导者从不删除条目,而跟随者仅在与领导者冲突时才会删除条目。

- 投票者把选票给了LeaderU,因此LeaderU的日志必须和投票者的日志一样都是最新的。 这导致了两个矛盾中的一个。

- 首先,如果投票者和LeaderU 的最后一个日志任期相同,那么LeaderU 的日志必须至少和投票者一样长,所以它的日志包含了投票者日志中的每一个条目。 这是一个矛盾,因为投票者包含了被提交的条目,而LeaderU 则没有。

- 否则,LeaderU 的最后一个日志任期必须大于投票者的。 进一步说,它大于 T,因为投票者的最后一个日志期限至少是 T(它包含来自期限 T 的提交条目)。假设,创建 LeaderU 的最后一个日志条目的较早的领导者的日志中必须包含已经提交的日志条目,那么,根据日志匹配属性,LeaderU 的日志也必须包含提交的条目,这是一个矛盾。

- 这就完成了矛盾。 因此,所有任期大于 T 的领导者必须包含任期 T 中已经提交的所有日志条目。

- Log Matching Property保证未来的领导者也将包含间接提交的条目,例如图 8(d) 中的索引 2。

Given the Leader Completeness Property, we can prove the State Machine Safety Property from Figure 3, which states that if a server has applied a log entry at a given index to its state machine, no other server will ever apply a different log entry for the same index. At the time a server applies a log entry to its state machine, its log must be identical to the leader’s log up through that entry and the entry must be committed. Now consider the lowest term in which any server applies a given log index; the Log Completeness Property guarantees that the leaders for all higher terms will store that same log entry, so servers that apply the index in later terms will apply the same value. Thus, the State Machine Safety Property holds.

依据领导者完整性属性(Leader Completeness Property),我们可以证明图 3 中的状态机安全属性(State Machine Safety Property),它指出如果服务器已将给定索引处的日志条目应用于其状态机,则没有其他服务器会为同一索引应用不同的日志条目。当服务器已经将日志条目应用于其状态机时,其日志必须与通过该条目成为领导者的日志相同,并且该日志条目必须已经被提交了。现在考虑任何服务器应用给定日志索引的最低期限; 日志完整性属性(Log Completeness Property)保证所有更高任期的领导者将存储相同的日志条目,因此在以后的任期中应用索引的服务器将应用相同的值。 因此,状态机安全属性(State Machine Safety Property)成立。

Finally, Raft requires servers to apply entries in log index order. Combined with the State Machine Safety Property, this means that all servers will apply exactly the same set of log entries to their state machines, in the same order.

最后,Raft 要求服务器按日志索引顺序应用条目。 结合状态机安全属性(State Machine Safety Property),这意味着所有服务器都将以相同的顺序将完全相同的日志条目集应用于其状态机。

5.5、跟随者和候选者宕机

Until this point we have focused on leader failures. Follower and candidate crashes are much simpler to handle than leader crashes, and they are both handled in the same way. If a follower or candidate crashes, then future RequestVote and AppendEntries RPCs sent to it will fail. Raft handles these failures by retrying indefinitely; if the crashed server restarts, then the RPC will complete successfully. If a server crashes after completing an RPC but before responding, then it will receive the same RPC again after it restarts. Raft RPCs are idempotent, so this causes no harm. For example, if a follower receives an AppendEntries request that includes log entries already present in its log, it ignores those entries in the new request.

到目前为止,我们的关注点都在领导者的失败上。 跟随者和候选者的失败相对来说,更容易进行处理,处理机制也与领导者相同。如果追随者或候选者发生了宕机,那么之后发送给它们的 RequestVote RPCs 和 AppendEntries RPCs 将失败。 Raft 通过无限重试来处理这些失败; 如果宕机的服务器重新启动,则 RPC 将成功完成请求。 当服务器接收处理完RPC请求,但是在回复之前宕机,那么它会在重新启动后再次收到相同的 RPC。 Raft RPC 是幂等的,所以这种情况并不会引发任何问题。 例如,如果一个跟随者收到一个 AppendEntries 请求,其中包括其日志中已经存在的日志条目,它会忽视这此请求。

5.6、时间和可用性

One of our requirements for Raft is that safety must not depend on timing: the system must not produce incorrect results just because some event happens more quickly or slowly than expected. However, availability (the ability of the system to respond to clients in a timely manner) must inevitably depend on timing. For example, if message exchanges take longer than the typical time between server crashes, candidates will not stay up long enough to win an election; without a steady leader, Raft cannot make progress.

我们对Raft的一个要求是,安全性不能依赖于时间:系统不能仅仅因为某些事件发生得比预期的快或慢而产生错误的结果。然而,可用性(系统及时响应客户机的能力)必然取决于时间。例如,由于服务器崩溃而导致信息交换的时间比通常情况下更长,候选者就无法长时间等待来赢得选举。如果没有一个稳定的领导者,Raft就不能正常的执行。

Leader election is the aspect of Raft where timing is most critical. Raft will be able to elect and maintain a steady leader as long as the system satisfies the following timing requirement:

领导者选举是 Raft 中时机最关键的方面。 只要系统满足以下时序要求,Raft 将能够选举和维护一个稳定的领导者:

- broadcastTime ≪ electionTimeout ≪ MTBF

- 广播时间 << 选举超时时间 << 平均故障间隔(MTBF,Mean Time Between Failures)

In this inequality broadcastTime is the average time it takes a server to send RPCs in parallel to every server in the cluster and receive their responses; electionTimeout is the election timeout described in Section 5.2; and MTBF is the average time between failures for a single server. The broadcast time should be an order of magnitude less than the election timeout so that leaders can reliably send the heartbeat messages required to keep followers from starting elections; given the randomized approach used for election timeouts, this inequality also makes split votes unlikely. The election timeout should be a few orders of magnitude less than MTBF so that the system makes steady progress. When the leader crashes, the system will be unavailable for roughly the election timeout; we would like this to represent only a small fraction of overall time.

在这个不等式中,广播时间(broadcastTime)是服务器向集群中的每个服务器并行发送 RPC 并接收它们的响应所花费的平均时间; 选举超时时间(electionTimeout)是第 5.2 节中描述的选举超时时间; 平均故障时间(MTBF,Mean Time Between Failures)是单个服务器的平均故障间隔时间。 广播时间应该比选举超时时间少一个数量级,这样领导者可以及时的发送心跳信息给跟随者以组织新的领导选举。通过使用随机的选举超时时间,分裂投票的情况也不大可能会出现。选举超时时间应该比 平均故障时间(MTBF) 小几个数量级,这样系统就能正常运行。 当领导者宕机时,系统将在选举超时时间(electionTimeout) 内不可用; 我们希望这仅占总时间的一小部分。

The broadcast time and MTBF are properties of the underlying system, while the election timeout is something we must choose. Raft’s RPCs typically require the recipient to persist information to stable storage, so the broadcast time may range from 0.5ms to 20ms, depending on storage technology. As a result, the election timeout is likely to be somewhere between 10ms and 500ms. Typical server MTBFs are several months or more, which easily satisfies the timing requirement.

广播时间(Broadcast Time)和 平均故障时间(MTBF,Mean Time Between Failures)是底层系统的属性,而选举超时时间是需要我们自己进行设置的。 Raft 的 RPCs 通常需要接收方将信息持久化到稳定的存储中,因此广播时间可能在 0.5 毫秒到 20 毫秒之间,具体取决于存储技术。 因此,选举超时很可能在 10 毫秒到 500 毫秒之间。 典型的服务器 MTBF 为几个月或更长时间,完全满足系统的时间因素要求。

6、集群成员变更

Up until now we have assumed that the cluster configuration (the set of servers participating in the consensus algorithm) is fixed. In practice, it will occasionally be necessary to change the configuration, for example to replace servers when they fail or to change the degree of replication. Although this can be done by taking the entire cluster off-line, updating configuration files, and then restarting the cluster, this would leave the cluster unavailable during the changeover. In addition, if there are any manual steps, they risk operator error. In order to avoid these issues, we decided to automate configuration changes and incorporate them into the Raft consensus algorithm.

到目前为止,我们假设集群配置(参与共识算法的服务器集)是固定的。 在实践中,有时需要更改配置,例如在服务器出现故障时更换服务器或更改复制的程度。 虽然这可以通过使整个集群下线、更改配置,然后重新启动集群来完成,但这会使集群在切换期间不可用。 另外,人为操作的因素也更容易引发系统错误。 为了避免这些问题,我们决定实现配置变更的自动化,并将其融合进共识算法中。

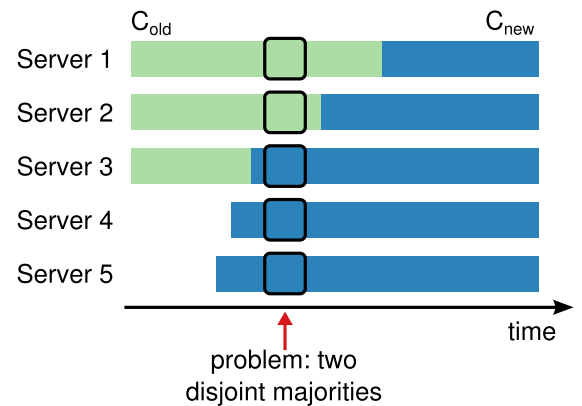

For the configuration change mechanism to be safe, there must be no point during the transition where it is possible for two leaders to be elected for the same term. Unfortunately, any approach where servers switch directly from the old configuration to the new configuration is unsafe. It isn’t possible to atomically switch all of the servers at once, so the cluster can potentially split into two independent majorities during the transition (see Figure 10).

为了保障配置变更机制的安全,在配置变更期间,不能存在同一任期内选举出两个领导者的情况。 不幸的是,任何服务器直接从旧配置切换到新配置的方法都是不安全的。 一次原子地切换所有服务器是不可能的,因此在转换过程中,集群极有可能出现裂脑现象(参见图 10)。

Figure 10: Switching directly from one configuration to another is unsafe because different servers will switch at different times. In this example, the cluster grows from three servers to five. Unfortunately, there is a point in time where two different leaders can be elected for the same term, one with a majority of the old configuration (Cold) and another with a majority of the new configuration (Cnew).

图 10:由于不同的服务器的切换时间不一样,因此直接从一种配置切换到另一种配置是不安全的。 在本例中,集群从三台服务器增加到五台。 不幸的是,有一个时间点上会出现同一个任期内可以选举出两个领导者的情况,一个领导者被拥有旧配置(Cold)的成员选举出,另一个则被拥有新配置(Cnew)的成员选举出。(在图例中出现问题的时刻,Server1可以通过自身以及Server2的投票拿到2/3比例的选票?? 而赢得选举,成为领导者;并且此时Server5可以通过自身和Server3以及Server4的投票 拿到3/5比例的选票?? 赢得选举,最终存在两个领导者。)

In order to ensure safety, configuration changes must use a two-phase approach. There are a variety of ways to implement the two phases. For example, some systems (e.g., [22]) use the first phase to disable the old configuration so it cannot process client requests; then the second phase enables the new configuration. In Raft the cluster first switches to a transitional configuration we call joint consensus; once the joint consensus has been committed, the system then transitions to the new configuration. The joint consensus combines both the old and new configurations:

为了确保安全,配置更改必须使用两阶段法。 有多种方法可以实现这两个阶段。 例如,某些系统(例如 [22]) 在第一阶段禁用旧配置,使其无法处理客户端请求; 然后在第二阶段启用新配置。 在 Raft 中,集群的配置会首先进入到我们称之为联合共识(joint consensus)的过渡配置; 一旦达成了联合共识,系统就会转换到新的配置。 联合共识中结合了新旧配置:

- Log entries are replicated to all servers in both configurations.

- Any server from either configuration may serve as leader

- Agreement (for elections and entry commitment) requires separate majorities from both the old and new configurations.

- 日志条目会被复制到集群中两种配置下的所有服务器上。

- 任一配置中的任何服务器都可以作为领导者。

- 选举和日志条目提交的商定需要按照新旧配置中的大多数服务器原则来要求。

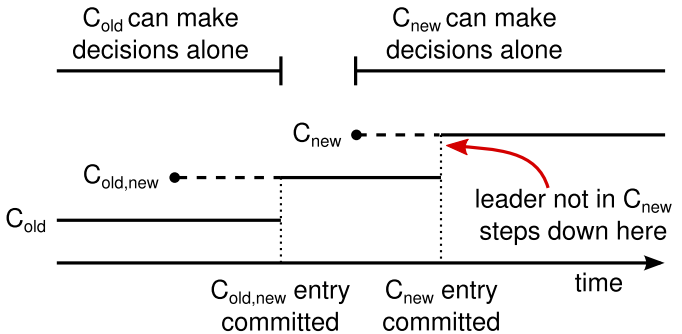

Figure 11: Timeline for a configuration change. Dashed lines show configuration entries that have been created but not committed, and solid lines show the latest committed configuration entry. The leader first creates the Cold,new configuration entry in its log and commits it to Cold,new (a majority of Cold and a majority of Cnew). Then it creates the Cnew entry and commits it to a majority of Cnew. There is no point in time in which Cold and Cnew can both make decisions independently.

图 11:配置更改的时间表。 虚线代表已创建但未提交的配置条目,实线代表最新提交的配置条目。 领导者首先在其日志中创建 Cold,new 配置条目并将其提交到 Cold,new(大多数 Cold 和大多数 Cnew)。 然后它创建 Cnew 条目并将其提交给大多数 Cnew。 Cold 和 Cnew 没有时间窗口可以独立做出决定。

The joint consensus allows individual servers to transition between configurations at different times without compromising safety. Furthermore, joint consensus allows the cluster to continue servicing client requests throughout the configuration change.

联合共识( joint consensus)允许单个服务器在不影响安全性的基础上,在不同的特定时刻进行不同配置的转换。 此外,联合共识允许集群在整个配置更改期间继续为客户端请求提供服务。

Cluster configurations are stored and communicated using special entries in the replicated log; Figure 11 illustrates the configuration change process. When the leader receives a request to change the configuration from Cold to Cnew, it stores the configuration for joint consensus (Cold,new in the figure) as a log entry and replicates that entry using the mechanisms described previously. Once a given server adds the new configuration entry to its log, it uses that configuration for all future decisions (a server always uses the latest configuration in its log, regardless of whether the entry is committed). This means that the leader will use the rules of Cold,new to determine when the log entry for Cold,new is committed. If the leader crashes, a new leader may be chosen under either Cold or Cold,new, depending on whether the winning candidate has received Cold,new. In any case, Cnew cannot make unilateral decisions during this period.

集群配置是通过使用复制日志中的特殊条目进行存储和通信; 图 11 说明了配置更改过程。 当领导者收到将配置从 Cold 更改为 Cnew 的请求时,它将联合共识的配置(图中的Cold,NEW)存储为日志条目,并按照前面所描述的机制将该条目复制给其他服务器。一旦某个服务器将收到的 Cold,new 配置日志条目并添加到自身的日志中,那么之后其所有的决策都将以此配置 Cold,new 为依据(服务器总是以日志中最新的配置为依据进行决策,无论该条目是否已提交)。 这意味着领导者将使用 Cold,new 的规则来确定 Cold,new 的日志条目何时被提交。 如果领导者发生了宕机,新的领导者将在旧配置 Cold或者联合配置 Cold,new 的机器中选举出来。这取决于获胜的候选者是否收到了 Cold,new。无论如何,Cnew在此期间不能单方面做出决定。

Once Cold,new has been committed, neitherCold norCnew can make decisions without approval of the other, and the Leader Completeness Property ensures that only servers with the Cold,new log entry can be elected as leader. It is now safe for the leader to create a log entry describing Cnew and replicate it to the cluster. Again, this configuration will take effect on each server as soon as it is seen. When the new configuration has been committed under the rules of Cnew, the old configuration is irrelevant and servers not in the new configuration can be shut down. As shown in Figure 11, there is no time when Cold and Cnew can both make unilateral decisions; this guarantees safety.

一旦 Cold,new 被提交后,具有Cold或者Cnew的服务器将不能再没有其它服务器允许的情况下单独做出任何决策,并且Leader Completeness Property 确保只有具有Cold,new 日志条目的服务器才能被选举为领导者。 此时,领导者可以安全地创建一个描述 Cnew 的日志条目并将其复制到集群的其他服务器中。 同样,当复制的服务器收到配置条目后就会立刻生效。当新的配置被提交后,旧的配置就变得无关紧要了,并且没有新配置的服务器可以被关闭了。 如图 11 所示,Cold 和 Cnew 没有时机能够单独做出决策, 这保证了安全。

There are three more issues to address for reconfiguration. The first issue is that new servers may not initially store any log entries. If they are added to the cluster in this state, it could take quite a while for them to catch up, during which time it might not be possible to commit new log entries. In order to avoid availability gaps, Raft introduces an additional phase before the configuration change, in which the new servers join the cluster as non-voting members (the leader replicates log entries to them, but they are not considered for majorities). Once the new servers have caught up with the rest of the cluster, the reconfiguration can proceed as described above.

在配置转换期间存在着三方面的问题,第一个就是新的服务器初始化启动的时候不包含任何日志条目,当它们加入集群中时,需要花费相当长的时间同步到最新的状态,在此期间,它将不能提交任何日志条目。为了避免可用性断层,Raft 在配置更改之前引入了一个额外的阶段,在这个阶段,新服务器作为非投票成员(none-voting)加入集群(领导者将日志条目复制给它们,但它们不纳入大多数考虑的范围)。当新的服务器同步到最新的状态后,就可以执行正常的配置转换过程了。

The second issue is that the cluster leader may not be part of the new configuration. In this case, the leader steps down (returns to follower state) once it has committed the Cnew log entry. This means that there will be a period of time (while it is committingCnew) when the leader is managing a cluster that does not include itself; it replicates log entries but does not count itself in majorities. The leader transition occurs when Cnew is committed because this is the first point when the new configuration can operate independently (it will always be possible to choose a leader from Cnew). Before this point, it may be the case that only a server from Cold can be elected leader.

第二个问题是集群领导者可能是没有新配置的那一部分。 在这种情况下,一旦提交了Cnew配置,领导者就会被转换成跟随者。这就意味着会有一段时间领导者管理着一个不包含自己的集群。它复制日志条目,但是却将自身排除在大多数机器之外。当Cnew被提交时会发生领导者转换,因为这个是新配置可以独立运行的第一个时刻(总是可以从 Cnew 中选择领导者)。在此之前,只有处于Cold的服务器才可以被选举为领导者。

The third issue is that removed servers (those not in Cnew) can disrupt the cluster. These servers will not receive heartbeats, so they will time out and start new elections. They will then send RequestVote RPCs with new term numbers, and this will cause the current leader to revert to follower state. A new leader will eventually be elected, but the removed servers will time out again and the process will repeat, resulting in poor availability.

第三个问题是无关的服务器(不在Cnew中的服务器)可能会破坏集群。 因为这些服务器不会收到心跳请求,所以它们就会产生超时并启动新一轮的选举。然后它们发送带有新的任期号的RequestVote RPCs,这就会导致当前的领导者接收到请求后转换到跟随者状态,最终会选举出一个新的领导者。但是那些无关的的服务器会再次超时,如此循环往复,最终会导致系统可用性的大大降低。

To prevent this problem, servers disregard RequestVote RPCs when they believe a current leader exists. Specifically, if a server receives a RequestVote RPC within the minimum election timeout of hearing from a current leader, it does not update its term or grant its vote. This does not affect normal elections, where each server waits at least a minimum election timeout before starting an election. However, it helps avoid disruptions from removed servers: if a leader is able to get heartbeats to its cluster, then it will not be deposed by larger term numbers.

为了避免这样的问题发生,服务器在认为当前领导者存在时会忽略 RequestVote RPC。具体来说,如果服务器在听取当前领导者的最小选举超时内收到 RequestVote RPC,则不会更新其任期或授予其投票权。这不会影响正常选举,其中每个服务器在开始选举之前至少等待最小选举超时。 然而,它有助于避免被移除的服务器造成的扰乱:如果领导者能够发送心跳给集群,那么它就不会被更大的任期号废黜。

7、日志压缩

Raft’s log grows during normal operation to incorporate more client requests, but in a practical system, it cannot grow without bound. As the log grows longer, it occupies more space and takes more time to replay. This will eventually cause availability problems without some mechanism to discard obsolete information that has accumulated in the log.

Raft日志会伴随着系统的日常运行持续增长。但在实际应用中,我们不能让它无限制的增长下去。日志越长,占用的存储空间越多,也将耗费状态机更多时间去重放日志条目。我们需要适当的机制来处理掉日志中的过期的信息,避免其影响系统的可用性。

Snapshotting is the simplest approach to compaction. In snapshotting, the entire current system state is written to a snapshot on stable storage, then the entire log up to that point is discarded. Snapshotting is used in Chubby and ZooKeeper, and the remainder of this section describes snapshotting in Raft.

快照是最简单的压缩方法。通过快照将某一时刻系统的当前状态写入快照文件,保存到磁盘,然后将这一时刻之前的所有日志丢弃。 Chubby 和 ZooKeeper 都使用了快照技术,本节的其余部分描述 Raft 中的快照。

Incremental approaches to compaction, such as log cleaning [36] and log-structured merge trees [30, 5], are also possible. These operate on a fraction of the data at once, so they spread the load of compaction more evenly over time. They first select a region of data that has accumulated many deleted and overwritten objects, then they rewrite the live objects from that region more compactly and free the region. This requires significant additional mechanism and complexity compared to snapshotting, which simplifies the problem by always operating on the entire data set. While log cleaning would require modifications to Raft, state machines can implement LSM trees using the same interface as snapshotting.

渐进式压缩方法,例如日志清理 [36] 和日志结构合并树 [30, 5]。 它们一次对一小部分数据进行操作,因此它们会随着时间的推移更均匀地分布压缩负载。 他们首先选择一个已经积累了许多已删除和覆盖对象的数据区域,然后他们更紧凑地重写该区域中的活动对象并释放该区域。 与快照相比,这需要大量额外的机制和复杂性,这通过始终对整个数据集进行操作来简化问题。 虽然日志清理需要对 Raft 进行修改,但状态机可以使用与快照相同的接口来实现 LSM 树。

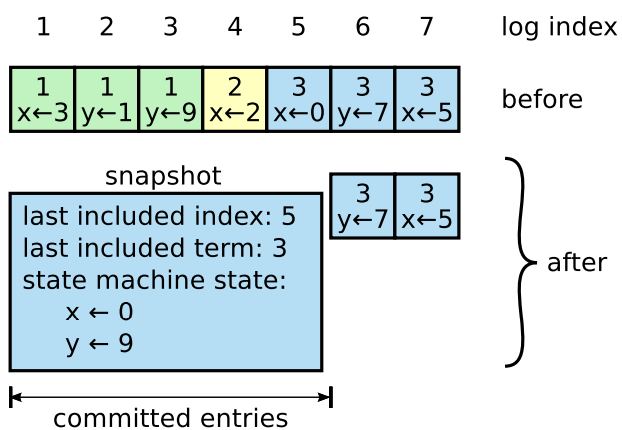

Figure 12 shows the basic idea of snapshotting in Raft. Each server takes snapshots independently, covering just the committed entries in its log. Most of the work consists of the state machine writing its current state to the snapshot. Raft also includes a small amount of metadata in the snapshot: the last included index is the index of the last entry in the log that the snapshot replaces (the last entry the state machine had applied), and the last included term is the term of this entry. These are preserved to support the AppendEntries consistency check for the first log entry following the snapshot, since that entry needs a previous log index and term. To enable cluster membership changes (Section 6), the snapshot also includes the latest configuration in the log as of last included index. Once a server completes writing a snapshot, it may delete all log entries up through the last included index, as well as any prior snapshot.

图 12 展示了 Raft 中快照的基本思想。 各个服务器独立的对已提交的日志条目进行日志快照。主要的工作是由状态机将它当前的状态写入快照文件来完成。Raft也保留了一些元信息在快照中:last included index代表状态机最后应用的日志条目索引,last included term则是指这一条目的任期。因为日志条目需要包含preLogIndex和preLogTerm这两个属性以应对AppendEntries的一致性检查。为了支持集群成员变更(第 6 节),快照文件中也在last included index配置前包含了最新的配置条目。一旦服务器完成写入快照,他就会将last include index之前的所有日志条目都删除掉,以及任何先前的快照。

Figure 12: A server replaces the committed entries in its log (indexes 1 through 5) with a new snapshot, which stores just the current state (variables x and y in this example). The snapshot’s last included index and term serve to position the snapshot in the log preceding entry 6.

图 12:服务器用新快照替换其日志中已提交的条目(索引 1 到 5),该快照仅存储当前状态(在本例中为变量 x 和 y)。 快照最后包含的索引和术语用于将快照定位在条目 6 之前的日志中。

Although servers normally take snapshots independently, the leader must occasionally send snapshots to followers that lag behind. This happens when the leader has already discarded the next log entry that it needs to send to a follower. Fortunately, this situation is unlikely in normal operation: a follower that has kept up with the leader would already have this entry. However, an exceptionally slow follower or a new server joining the cluster (Section 6) would not. The way to bring such a follower up-to-date is for the leader to send it a snapshot over the network.

尽管服务器通常独立拍摄快照,但在领导者必须偶尔向落后的跟随者发送快照,这种情况通常是由于领导者可能会丢弃了它需要发送给跟随者的下一个日志条目。 幸运的是,这种情况在正常操作中不太可能发生:和领导者保持同步的跟随者拥有着领导者的所有日志,但是,落后非常大的跟随着或者刚加入集群的服务器(第6节)却并非如此。处理此类跟随者的机制就是领导者发送日志快照来进行同步。

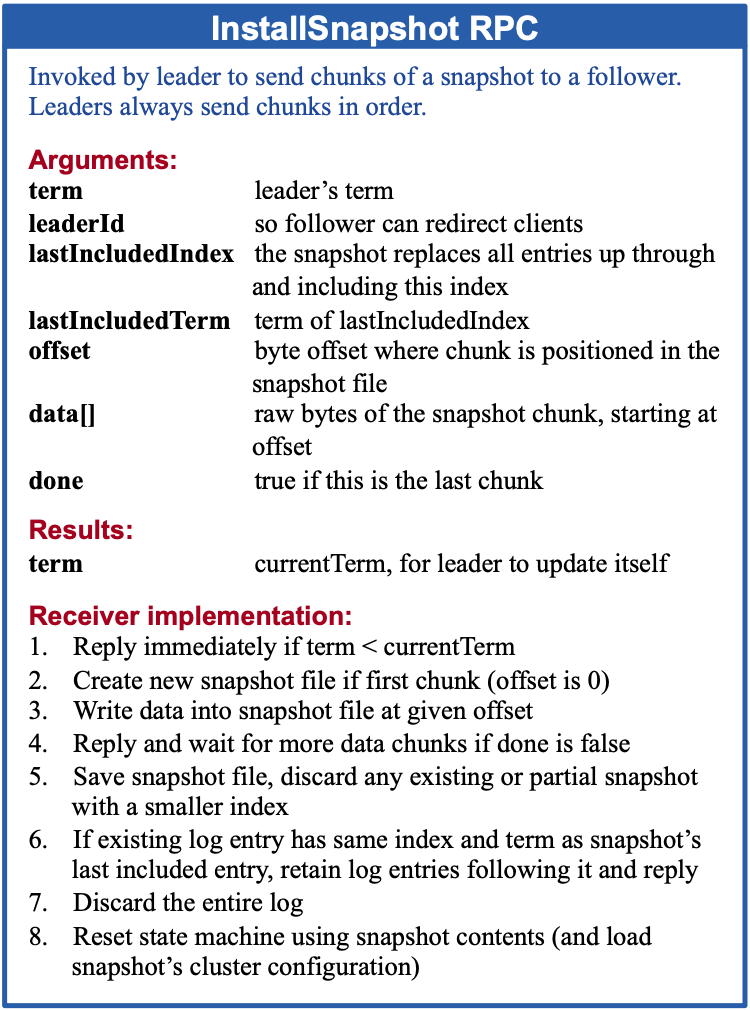

Figure 13: A summary of the InstallSnapshot RPC. Snapshots are split into chunks for transmission; this gives the follower a sign of life with each chunk, so it can reset its election timer.

图 13:InstallSnapshot RPC 的摘要。 快照被分成块进行传输; 这为追随者提供了每个块的生命迹象,因此它可以重置其选举计时器。

The leader uses a new RPC called InstallSnapshot to send snapshots to followers that are too far behind; see Figure 13. When a follower receives a snapshot with this RPC, it must decide what to do with its existing log entries. Usually the snapshot will contain new information not already in the recipient’s log. In this case, the follower discards its entire log; it is all superseded by the snapshot and may possibly have uncommitted entries that conflict with the snapshot. If instead the follower receives a snapshot that describes a prefix of its log (due to retransmission or by mistake), then log entries covered by the snapshot are deleted but entries following the snapshot are still valid and must be retained.

领导者使用一个名为 InstallSnapshot 的新 RPC 向落后较大的追随者发送快照; 请参见图 13。当跟随者收到带有此 RPC 的快照时,它必须决定如何处理其现有的日志条目。 通常,快照将包含收件人日志中尚未包含的新信息。 在这种情况下,跟随者会丢弃其整个日志(可能包含未提交的和和快照中有冲突的条目),然后替换为快照中的日志条目。相反,如果跟随者收到的快照中包含的日志条目是其自身日志之前的部分的条目(因为重传或其他错误),那么就会将快照覆盖的自身日志条目删除掉,但是这之后的日志条目任然有效,需要保留下来。

This snapshotting approach departs from Raft’s strong leader principle, since followers can take snapshots without the knowledge of the leader. However, we think this departure is justified. While having a leader helps avoid conflicting decisions in reaching consensus, consensus has already been reached when snapshotting, so no decisions conflict. Data still only flows from leaders to followers, just followers can now reorganize their data.

这种快照方法与 Raft 的强领导原则背道而驰,因为跟随者可以在领导者不知情的情况下拍摄快照。 然而,我们认为这种背道而驰是合理的。 虽然拥有领导者有助于在达成共识时避免冲突的决策,但在快照时已经达成共识,因此没有决策冲突。 数据仍然只从领导者流向跟随者,现在只是跟随者可以重组他们自己的数据。

We considered an alternative leader-based approach in which only the leader would create a snapshot, then it would send this snapshot to each of its followers. However, this has two disadvantages. First, sending the snapshot to each follower would waste network bandwidth and slow the snapshotting process. Each follower already has the information needed to produce its own snapshots, and it is typically much cheaper for a server to produce a snapshot from its local state than it is to send and receive one over the network. Second, the leader’s implementation would be more complex. For example, the leader would need to send snapshots to followers in parallel with replicating new log entries to them, so as not to block new client requests.

我们考虑了另一种的基于领导者的方法(leader-based approach),其中只有领导者会创建一个快照,然后将这个快照发送给它的每个追随者。 但是这种方法有两个缺点。 首先,将快照发送给每个跟随者会浪费网络带宽并拖慢整个的快照过程。 每个跟随者已经拥有了生成自己的快照所需的信息,并且服务器从其本地状态生成快照通常比通过网络发送和接收快照成本更低。 其次,领导者的实现会变得更加复杂, 例如,领导者需要在向跟随者发送快照的同时发送新的日志条目,并且不能阻塞客户端的请求。

There are two more issues that impact snapshotting performance. First, servers must decide when to snapshot. If a server snapshots too often, it wastes disk bandwidth and energy; if it snapshots too infrequently, it risks exhausting its storage capacity, and it increases the time required to replay the log during restarts. One simple strategy is to take a snapshot when the log reaches a fixed size in bytes. If this size is set to be significantly larger than the expected size of a snapshot, then the disk bandwidth overhead for snapshotting will be small.

还有两个问题会影响快照的性能。 首先,服务器必须决定何时进行快照。 如果服务器快照过于频繁,则会浪费磁盘带宽和能源; 如果快照不频繁,则有可能会耗尽其存储容量,并且会增加重新启动期间重放日志所需的时间。 一种简单的策略是在日志达到固定大小(以字节为单位)时拍摄快照。 如果将此大小设置为明显大于快照的预期大小,则快照的磁盘带宽开销将很小。

The second performance issue is that writing a snapshot can take a significant amount of time, and we do not want this to delay normal operations. The solution is to use copy-on-write techniques so that new updates can be accepted without impacting the snapshot being written. For example, state machines built with functional data structures naturally support this. Alternatively, the operating system’s copy-on-write support (e.g., fork on Linux) can be used to create an in-memory snapshot of the entire state machine (our implementation uses this approach).

第二个性能问题是写入快照可能需要大量时间,我们不希望这影响正常的系统运行,我们可以采用Cow(copy-on-write)机制,这样就可以在不影响正在写入的快照的情况下接受新的更新。例如,基于功能性结构数据的状态机(state machines built with functional data structures)就天然的支持这种特性。或者我们可以使用操作系统的copy-on-write机制(例如,Linux 上的 fork)来创建状态机的内存快照(我们的实现使用这种方法)。

8、客户端交互

This section describes how clients interact with Raft, including how clients find the cluster leader and how Raft supports linearizable semantics [10]. These issues apply to all consensus-based systems, and Raft’s solutions are similar to other systems.

本节描述客户端如何与 Raft 交互,包括客户端如何找到集群领导者以及 Raft 如何支持线性化语义(linearizable semantics)[10]。 这些问题适用于所有基于共识算法的系统,Raft 的解决方案与其他系统大体相同。